We need to stop pretending AI is intelligent

-

I think most people tend to overlook the most obvious advantages and are overly focused on what it is supposed to be and what it is marketed as.

No need to think about how to phrase a thing for Google to get a decent starting point for reading. No need to find the correct terminology before finding the thing you are looking for. Just ask like you would ask a knowledgeable individual and you get an overview of what you wanted to ask in the first place.

Discuss a little to get the options and then start reading and researching the everliving shit out of them to confirm all the details.

Agreed.

When I was a kid we went to the library. If a card catalog didn't yield the book you needed, you asked the librarian. They often helped. No one sat around after the library wondering if the librarian was "truly intelligent".

These are tools. Tools slowly get better. If a tool makes your life easier or your work better, you'll eventually use it.

Yes, there are woodworkers that eschew power tools but they are not typical. They have a niche market, and that's great, but it's a choice for the maker and user of their work.

-

Steve Gibson on his podcast, Security Now!, recently suggested that we should call it "Simulated Intelligence". I tend to agree.

I’ve taken to calling it Automated Inference

-

Good luck. Even David Attenborough can't help but anthropomorphize. People will feel sorry for a picture of a dot separated from a cluster of other dots.

The play by AI companies is that it's human nature for us to want to give just about every damn thing human qualities.

I'd explain more but as I write this my smoke alarm is beeping a low battery warning, and I need to go put the poor dear out of its misery.

This is the current problem with "misalignment". It's a real issue, but it's not "AI lying to prevent itself from being shut off" as a lot of articles tend to anthropomorphize it. The issue is (generally speaking) it's trying to maximize a numerical reward by providing responses to people that they find satisfactory. A legion of tech CEOs are flogging the algorithm to do just that, and as we all know, most people don't actually want to hear the truth. They want to hear what they want to hear.

LLMs are a poor stand in for actual AI, but they are at least proficient at the actual thing they are doing. Which leads us to things like this, https://www.youtube.com/watch?v=zKCynxiV_8I

-

Steve Gibson on his podcast, Security Now!, recently suggested that we should call it "Simulated Intelligence". I tend to agree.

Pseudo-intelligence

-

Super duper shortsighted article.

I mean, sure, some points are valid. But it's not just programmers who are involved. Other professions are too: psychologists, philosophers, artists, doctors, etc.

And I agree AGI probably won't emerge from binary systems. However... There's quantum computing on the rise. Latest theories of the mind and consciousness discuss how consciousness and our minds in general also appear to work with quantum states.

Finally, if biofeedback were the deciding factor... that can be simulated, modeled after a sample of humans.

The article is just doomsday hoo ha, unbalanced.

Show both sides of the coin...

Honestly I don't think we'll have AGI until we can fully merge meat space and cyber space. Once we can simply plug our brains into a computer and fully interact with it then we may see AGI.

Obviously we're nowhere near that level of man-machine integration. I doubt we'll see even the slightest chance of it being possible for at least 10 years at the very earliest. But when we do get there, there's a distinct chance that it's more of a Borg situation where the computer takes a parasitic role rather than a symbiotic one.

But by the time we are able to fully integrate computers into our brains I believe we will have trained A.I. systems enough to learn by interaction and observation. So being plugged directly into the human brain it could take prior knowledge of genome mapping and other related tasks and apply them to mapping our brains and possibly growing artificial brains to achieve self awareness and independent thought.

Or we'll just nuke ourselves out of existence and that will be that.

-

Steve Gibson on his podcast, Security Now!, recently suggested that we should call it "Simulated Intelligence". I tend to agree.

Reminds me of Mass Effect's VI, "virtual intelligence": a system that's specifically designed to be not truly intelligent, as AI systems are banned throughout the galaxy for their potential to go rogue.

-

We are constantly fed a version of AI that looks, sounds and acts suspiciously like us. It speaks in polished sentences, mimics emotions, expresses curiosity, claims to feel compassion, even dabbles in what it calls creativity.

But what we call AI today is nothing more than a statistical machine: a digital parrot regurgitating patterns mined from oceans of human data (the situation hasn’t changed much since it was discussed here five years ago). When it writes an answer to a question, it literally just guesses which letter and word will come next in a sequence – based on the data it’s been trained on.

This means AI has no understanding. No consciousness. No knowledge in any real, human sense. Just pure probability-driven, engineered brilliance — nothing more, and nothing less.

So why is a real “thinking” AI likely impossible? Because it’s bodiless. It has no senses, no flesh, no nerves, no pain, no pleasure. It doesn’t hunger, desire or fear. And because there is no cognition — not a shred — there’s a fundamental gap between the data it consumes (data born out of human feelings and experience) and what it can do with them.

Philosopher David Chalmers calls the mysterious mechanism underlying the relationship between our physical body and consciousness the “hard problem of consciousness”. Eminent scientists have recently hypothesised that consciousness actually emerges from the integration of internal, mental states with sensory representations (such as changes in heart rate, sweating and much more).

Given the paramount importance of the human senses and emotion for consciousness to “happen”, there is a profound and probably irreconcilable disconnect between general AI, the machine, and consciousness, a human phenomenon.

That headline is a straw man, and the article really argues on General AI, which also has consciousness.

The current state of AI is definitely intelligent, but it's not GAI.

Bullshit headline.

-

No it’s really not at all the same. Humans don’t think according to the probabilities of what is the likely best next word.

No, you think according to the chemical proteins floating around your head. You don't even know the decisions you're making when you make them.

You're a meat based copy machine with a built in justification box.

-

Steve Gibson on his podcast, Security Now!, recently suggested that we should call it "Simulated Intelligence". I tend to agree.

I love that. It makes me want to take it a step further and just call it "imitation intelligence."

-

People who don't like "AI" should check out the newsletter and / or podcast of Ed Zitron. He goes hard on the topic.

Citation Needed (by Molly White) also frequently bashes AI.

I like her stuff because, no matter how you feel about crypto, AI, or other big tech, you can never fault her reporting. She steers clear of any subjective accusations or prognostication.

It’s all “ABC person claimed XYZ thing on such and such date, and then 24 hours later submitted a report to the FTC claiming the exact opposite. They later bought $5 million worth of Trumpcoin, and two weeks later the FTC announced they were dropping the lawsuit.”

-

How is outputting things based on things it has learned any different to what humans do?

Humans are not probabilistic, predictive chat models. If you think reasoning is taking a series of inputs, and then echoing the most common of those as output then you mustn't reason well or often.

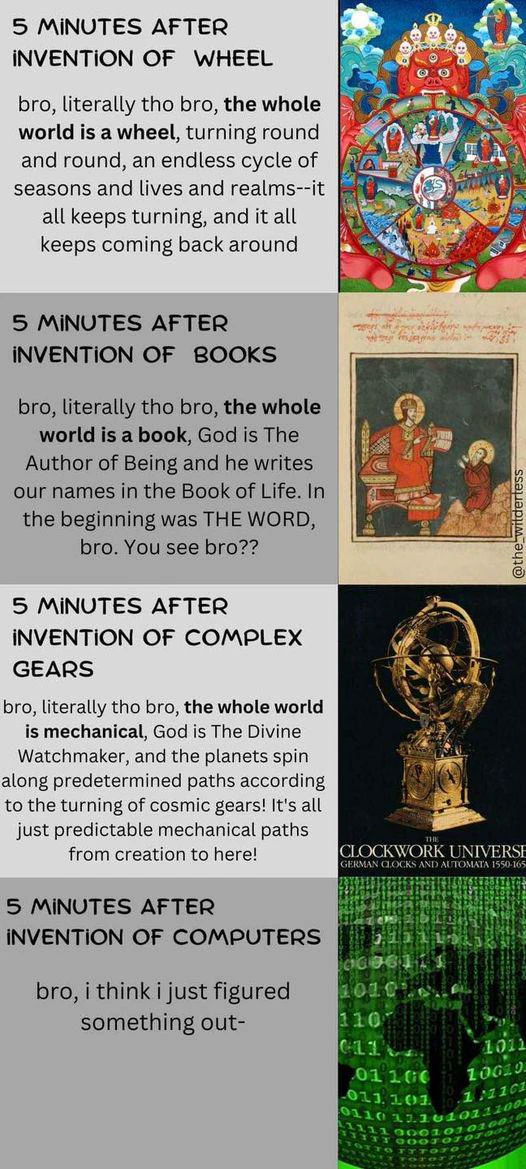

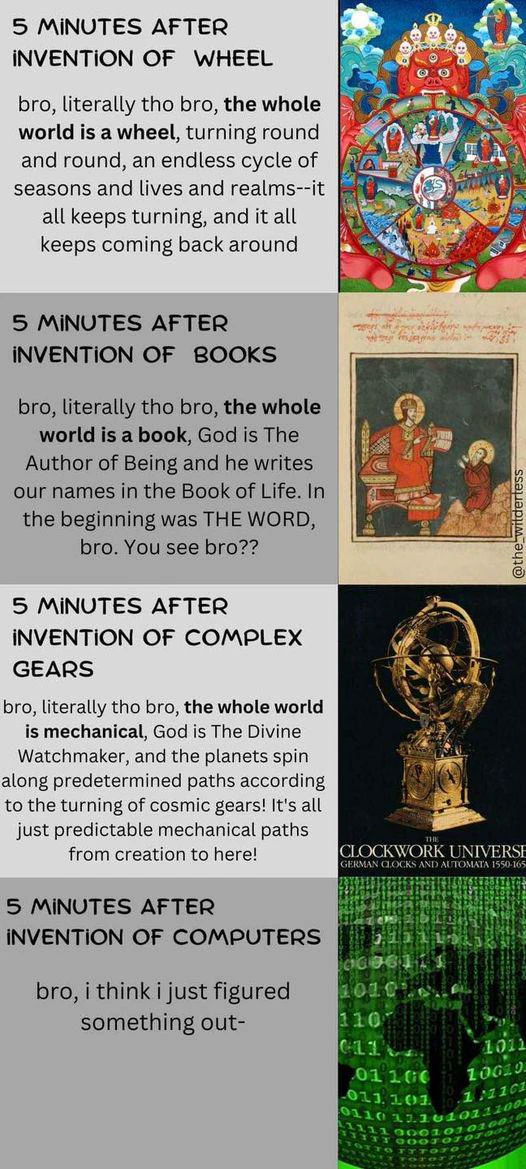

If you were born during the first industrial revolution, then you'd think the mind was a complicated machine. People seem to always anthropomorphize inventions of the era.

-

I love that. It makes me want to take it a step further and just call it "imitation intelligence."

If only there were a word, literally defined as:

Made by humans, especially in imitation of something natural.

-

That headline is a straw man, and the article really argues on General AI, which also has consciousness.

The current state of AI is definitely intelligent, but it's not GAI.

Bullshit headline.

I think you're misunderstanding the point the author is making. He is arguing that even the current state is not intelligent; it is merely a fancy autocorrect, and it doesn't know or understand anything about the prompts it receives. As the author stated, it can only guess at the next statistically most likely piece of information based on the data that has been fed into it. That's not intelligence.
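For anyone who wants to see what "guess the next statistically most likely piece" can look like in practice, here's a toy sketch in Python. To be clear, this is a simple bigram word counter, not how a real LLM is built (those use neural networks over tokens and far more context). The function names `train_bigrams` and `predict_next` and the tiny corpus are made up for illustration.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following


def predict_next(following, word):
    """Return the most frequent follower of `word`, or None if it was never seen with a follower."""
    counts = following.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# Hypothetical toy corpus; a real model is trained on vastly more data.
corpus = "the cat sat on the mat and the cat ate the fish"
model = train_bigrams(corpus)

print(predict_next(model, "the"))    # "cat" follows "the" most often here
print(predict_next(model, "zebra"))  # unseen word, so there is nothing to guess from
```

The sketch only ever echoes whatever followed a word most often in its training text, which is the behaviour the comment above is describing. Whether scaling that idea up counts as intelligence is exactly what this thread is arguing about.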

-

That headline is a straw man, and the article really argues on General AI, which also has consciousness.

The current state of AI is definitely intelligent, but it's not GAI.

Bullshit headline.

Today's AI is Clippy on steroids. It's not intelligent or creative. You can't feed it physics and astronomy books without the equation for C and tell it to create the equation for C. It's fancy autocorrect, and it's a waste of compute and energy.

-

We are constantly fed a version of AI that looks, sounds and acts suspiciously like us. It speaks in polished sentences, mimics emotions, expresses curiosity, claims to feel compassion, even dabbles in what it calls creativity.

But what we call AI today is nothing more than a statistical machine: a digital parrot regurgitating patterns mined from oceans of human data (the situation hasn’t changed much since it was discussed here five years ago). When it writes an answer to a question, it literally just guesses which letter and word will come next in a sequence – based on the data it’s been trained on.

This means AI has no understanding. No consciousness. No knowledge in any real, human sense. Just pure probability-driven, engineered brilliance — nothing more, and nothing less.

So why is a real “thinking” AI likely impossible? Because it’s bodiless. It has no senses, no flesh, no nerves, no pain, no pleasure. It doesn’t hunger, desire or fear. And because there is no cognition — not a shred — there’s a fundamental gap between the data it consumes (data born out of human feelings and experience) and what it can do with them.

Philosopher David Chalmers calls the mysterious mechanism underlying the relationship between our physical body and consciousness the “hard problem of consciousness”. Eminent scientists have recently hypothesised that consciousness actually emerges from the integration of internal, mental states with sensory representations (such as changes in heart rate, sweating and much more).

Given the paramount importance of the human senses and emotion for consciousness to “happen”, there is a profound and probably irreconcilable disconnect between general AI, the machine, and consciousness, a human phenomenon.

And yet, paradoxically, it is far more intelligent than those people who think it is intelligent.

-

If only there were a word, literally defined as:

Made by humans, especially in imitation of something natural.

*throws hands up* At least we tried.

-

We are constantly fed a version of AI that looks, sounds and acts suspiciously like us. It speaks in polished sentences, mimics emotions, expresses curiosity, claims to feel compassion, even dabbles in what it calls creativity.

But what we call AI today is nothing more than a statistical machine: a digital parrot regurgitating patterns mined from oceans of human data (the situation hasn’t changed much since it was discussed here five years ago). When it writes an answer to a question, it literally just guesses which letter and word will come next in a sequence – based on the data it’s been trained on.

This means AI has no understanding. No consciousness. No knowledge in any real, human sense. Just pure probability-driven, engineered brilliance — nothing more, and nothing less.

So why is a real “thinking” AI likely impossible? Because it’s bodiless. It has no senses, no flesh, no nerves, no pain, no pleasure. It doesn’t hunger, desire or fear. And because there is no cognition — not a shred — there’s a fundamental gap between the data it consumes (data born out of human feelings and experience) and what it can do with them.

Philosopher David Chalmers calls the mysterious mechanism underlying the relationship between our physical body and consciousness the “hard problem of consciousness”. Eminent scientists have recently hypothesised that consciousness actually emerges from the integration of internal, mental states with sensory representations (such as changes in heart rate, sweating and much more).

Given the paramount importance of the human senses and emotion for consciousness to “happen”, there is a profound and probably irreconcilable disconnect between general AI, the machine, and consciousness, a human phenomenon.

The thing is, AI is a compression of intelligence but not intelligence itself. That's the part that confuses people.

AI is the ability to put anything describable into a compressed zip.

-

If you were born during the first industrial revolution, then you'd think the mind was a complicated machine. People seem to always anthropomorphize inventions of the era.

This is great

-

We are constantly fed a version of AI that looks, sounds and acts suspiciously like us. It speaks in polished sentences, mimics emotions, expresses curiosity, claims to feel compassion, even dabbles in what it calls creativity.

But what we call AI today is nothing more than a statistical machine: a digital parrot regurgitating patterns mined from oceans of human data (the situation hasn’t changed much since it was discussed here five years ago). When it writes an answer to a question, it literally just guesses which letter and word will come next in a sequence – based on the data it’s been trained on.

This means AI has no understanding. No consciousness. No knowledge in any real, human sense. Just pure probability-driven, engineered brilliance — nothing more, and nothing less.

So why is a real “thinking” AI likely impossible? Because it’s bodiless. It has no senses, no flesh, no nerves, no pain, no pleasure. It doesn’t hunger, desire or fear. And because there is no cognition — not a shred — there’s a fundamental gap between the data it consumes (data born out of human feelings and experience) and what it can do with them.

Philosopher David Chalmers calls the mysterious mechanism underlying the relationship between our physical body and consciousness the “hard problem of consciousness”. Eminent scientists have recently hypothesised that consciousness actually emerges from the integration of internal, mental states with sensory representations (such as changes in heart rate, sweating and much more).

Given the paramount importance of the human senses and emotion for consciousness to “happen”, there is a profound and probably irreconcilable disconnect between general AI, the machine, and consciousness, a human phenomenon.

Another article written by a person who doesn't realize that human intelligence is 100% about predicting sequences of things (including words), and therefore has only the most nebulous idea of how to tell the difference between an LLM and a person.

The result is a lot of uninformed flailing and some pithy statements. You can predict how the article is going to go just from the headline because it's the same article you already read countless times.

So why is a real “thinking” AI likely impossible? Because it’s bodiless. It has no senses, no flesh, no nerves, no pain, no pleasure.

May as well have written "Durrrrrrrrrrrrrrr brghlgbhfblrghl." It didn't even occur to the author to ask, "what is thinking? what is reasoning?" The point was to write another junk article to get ad views. There is nothing of substance in it.

-

Citation Needed (by Molly White) also frequently bashes AI.

I like her stuff because, no matter how you feel about crypto, AI, or other big tech, you can never fault her reporting. She steers clear of any subjective accusations or prognostication.

It’s all “ABC person claimed XYZ thing on such and such date, and then 24 hours later submitted a report to the FTC claiming the exact opposite. They later bought $5 million worth of Trumpcoin, and two weeks later the FTC announced they were dropping the lawsuit.”

I'm subscribed to her Web3 is Going Great RSS. She coded the website in straight HTML, according to a podcast that I listen to. She's great.

I didn't know she had a podcast. I just added it to my backup playlist. If it's as good as I hope it is, it'll get moved to the primary playlist. Thanks!