Something Bizarre Is Happening to People Who Use ChatGPT a Lot

-

This post did not contain any content.

I knew a guy I went to rehab with. Talked to him a while back and he invited me to his discord server. It was him, and like three self trained LLMs and a bunch of inactive people who he had invited like me. He would hold conversations with the LLMs like they had anything interesting or human to say, which they didn't. Honestly a very disgusting image, I left because I figured he was on the shit again and had lost it and didn't want to get dragged into anything.

-

What the fuck is vibe coding... Whatever it is I hate it already.

Andrej Karpathy (one of the founding members of OpenAI, who left to work for Tesla from 2017 to 2022, returned to OpenAI for a bit, and is now working on his startup "Eureka Labs - we are building a new kind of school that is AI native") made a tweet defining the term:

There's a new kind of coding I call "vibe coding", where you fully give in to the vibes, embrace exponentials, and forget that the code even exists. It's possible because the LLMs (e.g. Cursor Composer w Sonnet) are getting too good. Also I just talk to Composer with SuperWhisper so I barely even touch the keyboard. I ask for the dumbest things like "decrease the padding on the sidebar by half" because I'm too lazy to find it. I "Accept All" always, I don't read the diffs anymore. When I get error messages I just copy paste them in with no comment, usually that fixes it. The code grows beyond my usual comprehension, I'd have to really read through it for a while. Sometimes the LLMs can't fix a bug so I just work around it or ask for random changes until it goes away. It's not too bad for throwaway weekend projects, but still quite amusing. I'm building a project or webapp, but it's not really coding - I just see stuff, say stuff, run stuff, and copy paste stuff, and it mostly works.

People ignore the "It's not too bad for throwaway weekend projects" part and try to use this style of coding to create "production-grade" code... Let's just say it's not going well.

source (xcancel link)

-

Jfc, I didn't even know who Grummz was until yesterday but gawdamn that is some nuclear cringe.

Something worth knowing about that guy?

I mean, apart from the fact that he seems to be a complete idiot?

Midwits shouldn't have been allowed on the Internet.

-

people tend to become dependent upon AI chatbots when their personal lives are lacking. In other words, the neediest people are developing the deepest parasocial relationship with AI

Preying on the vulnerable is a feature, not a bug.

That was clear from GPT-3, day 1.

I read a Reddit post about a woman who used GPT-3 to effectively replace her husband, who had passed on not too long before that. She used it as a way to grieve, I suppose? She ended up noticing that she was getting too attached to it, and had to leave him behind a second time...

-

If you are dating a body pillow, I think that's a pretty good sign that you have taken a wrong turn in life.

What if it's either that or suicide? I imagine that people who make that choice don't have many options, because monetary, physical, or mental issues leave them unable to choose otherwise.

-

Thanks, I'll look into it!

Presuming you're writing in Python: Check out https://docs.astral.sh/ruff/

It's an all-in-one tool that combines several older (pre-existing) tools. Very fast, very cool.

-

TIL becoming dependent on a tool you frequently use is "something bizarre" - not the ordinary, unsurprising result you would expect with common sense.

-

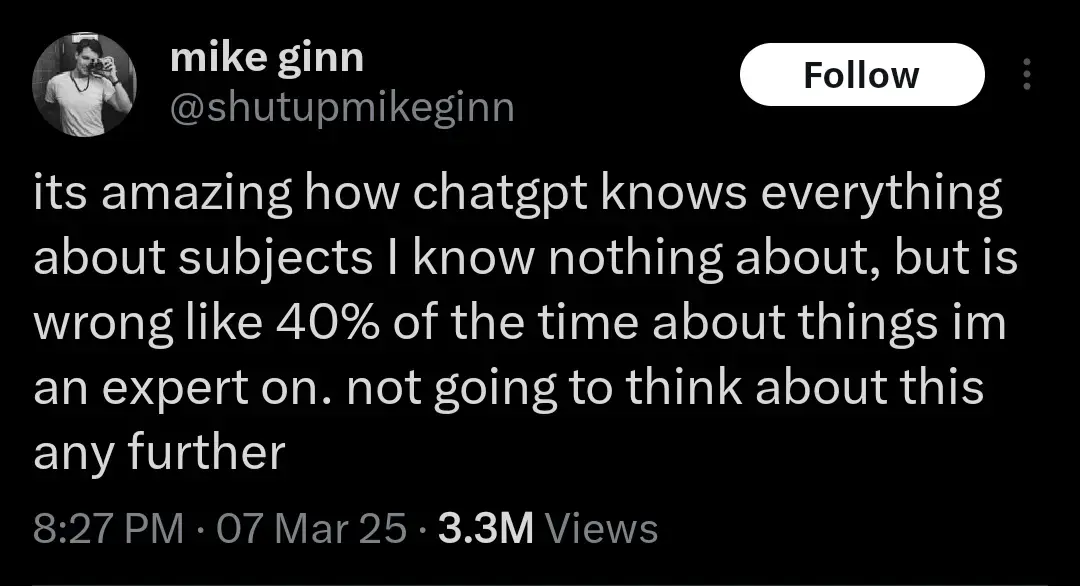

Another realization might be that the humans whose output ChatGPT was trained on were probably already 40% wrong about everything. But let's not think about that either. AI Bad!

-

I can see how people would seek refuge talking to an AI given that a lot of online forums have really inflammatory users; it is one of the biggest downfalls of online interactions. I have had similar thoughts myself - without knowing me, strangers could see something I write as hostile or cold, but it's really more often friends that turn blind to what I'm saying and project a tone that is likely not there to begin with. They used to not do that, but in the past year or so it's gotten to the point where I frankly just don't participate in our group chats and really only talk if it's one-on-one text or in person. I feel like I'm walking on eggshells; even if I were to show genuine interest in the conversation, it is taken the wrong way. That being said, I think we're coming from opposite ends of a shared experience but are seeing the same thing, we're just viewing it differently because of what we have experienced individually. This gives me more to think about!

I feel a lot of similarities in your last point, especially with having friends who have wildly different interests. Most of mine don't care to even reach out to me beyond a few things here and there; they don't ask follow-up questions and they're certainly not interested when I do speak. To share what I'm seeing, my friends are using these LLMs to an extent where if I am not responding in the same manner or structure it's either ignored or I'm told I'm not providing the appropriate response they wanted. This is where the tone comes in for me, because ChatGPT will still have a regarded tone of sorts to the user; that is, it's calm, non-judgmental, and friendly. With that, the people in my friend group that do heavily use it have appeared to become more sensitive to even how others like me in the group talk, to the point where they take it upon themselves to correct my speech because the cadence, tone and/or structure is not fitting a blind expectation I wouldn't know about. I find it concerning, because regardless of the people who are intentionally mean, and for interpersonal relationships, it's creating an expectation that can't be achieved with being human. We have emotions and conversation patterns that vary and we're not always predictable in what we say, which can suck when you want someone to be interested in you and have meaningful conversations but it doesn't tend to pan out. And I feel that. A lot, unfortunately. AKA I just wish my friends cared sometimes.

I'm getting the sense here that you're placing most - if not all - of the blame on LLMs, but that’s probably not what you actually think. I'm sure you'd agree there are other factors at play too, right? One theory that comes to mind is that the people you're describing probably spend a lot of time debating online and are constantly exposed to bad-faith arguments, personal attacks, people talking past each other, and dunking - basically everything we established is wrong with social media discourse. As a result, they've developed a really low tolerance for it, and the moment someone starts making noises sounding even remotely like those negative encounters, they automatically label them as “one of them” and switch into lawyer mode - defending their worldview against claims that aren’t even being made.

That said, since we're talking about your friends and not just some random person online, I think an even more likely explanation is that you’ve simply grown apart. When people close to you start talking to you in the way you described, it often means they just don’t care the way they used to. Of course, it’s also possible that you’re coming across as kind of a prick and they’re reacting to that - but I’m not sensing any of that here, so I doubt that’s the case.

I don’t know what else you’ve been up to over the past few years, but I’m wondering if you’ve been on some kind of personal development journey - because I definitely have, and I’m not the same person I was when I met my friends either. A lot of the things they may have liked about me back then have since changed, and maybe they like me less now because of it. But guess what? I like me more. If the choice is to either keep moving forward and risk losing some friends, or regress just to keep them around, then I’ll take being alone. Chris Williamson calls this the "Lonely Chapter" - you’re different enough that you no longer fit in with your old group, but not yet far enough along to have found the new one.

-

What the fuck is vibe coding... Whatever it is I hate it already.

It's when you give the wheel to someone less qualified than Jesus: Generative AI

-

I knew a guy I went to rehab with. Talked to him a while back and he invited me to his discord server. It was him, and like three self trained LLMs and a bunch of inactive people who he had invited like me. He would hold conversations with the LLMs like they had anything interesting or human to say, which they didn't. Honestly a very disgusting image, I left because I figured he was on the shit again and had lost it and didn't want to get dragged into anything.

Jesus that's sad

-

What if it's either that or suicide? I imagine that people who make that choice don't have many options, because monetary, physical, or mental issues leave them unable to choose otherwise.

I'm confused. If someone is in a place where they are choosing between dating a body pillow and suicide, then they have DEFINITELY made a wrong turn somewhere. They need some kind of assistance, and I hope they can get what they need, no matter what they choose.

I think my statement about "a wrong turn in life" is being interpreted too strongly; it wasn't intended to be such a strong and absolute statement of failure. Someone who's taken a wrong turn has simply made a mistake. It could be minor, it could be serious. I'm not saying their life is worthless. I've made a TON of wrong turns myself.

-

TIL becoming dependent on a tool you frequently use is "something bizarre" - not the ordinary, unsurprising result you would expect with common sense.

If you actually read the article, I'm pretty sure the bizarre thing is really these people using a 'tool' forming a toxic parasocial relationship with it, becoming addicted and beginning to see it as a 'friend'.

-

now replace chatgpt with these terms, one by one:

- the internet

- tiktok

- lemmy

- their cell phone

- news media

- television

- radio

- podcasts

- junk food

- money

-

If you actually read the article, I'm pretty sure the bizarre thing is really these people using a 'tool' forming a toxic parasocial relationship with it, becoming addicted and beginning to see it as a 'friend'.

No, I basically get the same read as OP. Idk I like to think I'm rational enough & don't take things too far, but I like my car. I like my tools, people just get attached to things we like.

Give it an almost human, almost friend type interaction & yes I'm not surprised at all some people, particularly power users, are developing parasocial attachments or addiction to this non-human tool. I don't call my friends. I text. ¯\(°_o)/¯

-

If you actually read the article, I'm pretty sure the bizarre thing is really these people using a 'tool' forming a toxic parasocial relationship with it, becoming addicted and beginning to see it as a 'friend'.

Yes, it says the neediest people are doing that, not simply "people who use ChatGPT a lot". This article is like a "Scientists warn civilization-killer asteroid could hit Earth" headline where the article then clarifies that there's a 0.3% chance of impact.

-

No, I basically get the same read as OP. Idk I like to think I'm rational enough & don't take things too far, but I like my car. I like my tools, people just get attached to things we like.

Give it an almost human, almost friend type interaction & yes I'm not surprised at all some people, particularly power users, are developing parasocial attachments or addiction to this non-human tool. I don't call my friends. I text. ¯\(°_o)/¯

I loved my car. Just had to scrap it recently. I got sad. I didn't go through withdrawal symptoms or feel like I was mourning a friend. You can appreciate something without building an emotional dependence on it. I'm not particularly surprised this is happening to some people either, especially with the amount of brainrot out there surrounding these LLMs, so maybe bizarre is the wrong word, but it is a little disturbing that people are getting so attached to something that is so fundamentally flawed.

-

Another realization might be that the humans whose output ChatGPT was trained on were probably already 40% wrong about everything. But let's not think about that either. AI Bad!

I'll bite. Let's think:

- there are three humans who are 98% right about what they say, and where they know they might be wrong, they indicate it

- now there is an llm (fuck capitalization, I hate the ways they are shoved everywhere that much) trained on their output

- now llm is asked about the topic and computes the answer string

By definition that answer string can contain all the probably-wrong things without proper indicators ("might", "under such and such circumstances" etc)

If you want to say 40% wrong llm means 40% wrong sources, prove me wrong

-

Jesus that's sad

Yeah. I tried talking to him about his AI use but I realized there was no point. He also mentioned he had tried RCs again and I was like alright you know you can't handle that but fine.. I know from experience you can't convince addicts they are addicted to anything. People need to realize that themselves.

-

I don’t know how people can be so easily taken in by a system that has been proven to be wrong about so many things. I got an AI search response just yesterday that dramatically understated an issue by citing an unscientific ideologically based website with high interest and reason to minimize said issue. The actual studies showed a 6x difference. It was blatant AF, and I can’t understand why anyone would rely on such a system for reliable, objective information or responses. I have noted several incorrect AI responses to queries, and people mindlessly citing said response without verifying the data or its source. People gonna get stupider, faster.

I don’t know how people can be so easily taken in by a system that has been proven to be wrong about so many things

Ahem. Wasn't there an election recently, in some big country, with an uncanny similarity to that?