Will CEOs eventually have to replace themselves with AI to please shareholders?

-

Yeah, a lot of it is messy, but they are not being replicated by commodity GPUs.

LLMs have no intelligence. They are just exceedingly good at language, which has a lot of human knowledge in it. Just read Claude's system prompt and tell me it's still smart when it needs to be told four separate times to avoid copyright infringement.

LLMs have no intelligence. They are just exceedingly good at language, which has a lot of human knowledge in it.

Hm... two bucks... and it only transports matter? Hm...

It's amazing how quickly people dismiss technological capabilities as mundane that would have been miraculous just a few years earlier.

-

I did not immediately dismiss LLMs; my thoughts come from experience, observing the pace of improvement, and investigating how and why LLMs work.

They do not think; they simply execute an algorithm. Yeah, that algorithm is exceedingly large and complicated, but there's still no thought, and there's no learning outside of training. Humans are always learning, even if it doesn't look like it, and our brains are constantly rewiring themselves. LLMs don't.

I'm certain in the future we will get true AI, but it's not here yet.

-

UnitedHealthcare CEO Brian Thompson was utilizing AI technology to mass murder people for shareholder profit.

And the AI being bad at its job was a feature.

-

If AI ends up running companies better than people, won’t shareholders demand the switch? A board isn’t paying a CEO $20 million a year for tradition, they’re paying for results. If an AI can do the job cheaper and get better returns, investors will force it.

And since corporations are already treated as “people” under the law, replacing a human CEO with an AI isn’t just swapping a worker for a machine, it’s one “person” handing control to another.

That means CEOs would eventually have to replace themselves, not because they want to, but because the system leaves them no choice. And AI would be considered a "person" under the law.

Sadly, I don't think this is going to happen. A good CEO doesn't make calculated decisions based on facts and weigh risk against profit. If he did, he would, at best, be a normal CEO. Who wants that? No, a truly great CEO does exactly what a truly bad CEO does: he takes risks that aren't proportional to the reward (and gets lucky)!

This is the only way to beat the game, just like with investments or roulette. There are no great roulette players who got rich by playing the odds, only lucky ones.
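To put a number on "playing the odds": assuming a single-zero (European) wheel with 37 pockets and a straight-up bet that pays 35 to 1 (standard house rules, not something stated in this comment), the expected return per unit staked is negative:

$$
\mathbb{E}[\text{return}] = \frac{1}{37}(+35) + \frac{36}{37}(-1) = -\frac{1}{37} \approx -2.7\%
$$

Betting strictly by the odds loses about 2.7% per bet on average, so anyone who walks away rich got there through variance, i.e. luck.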

Sure, with CEOs, this is in the aggregate. I'm sure there is a genius here and a Renaissance man there... But on the whole, the best advice is "get risky and get lucky". Try it out. I highly recommend it. No one remembers a loser. And the story continues.

-

This is closer to what I mean by strategy and decisions: https://matthewdwhite.medium.com/i-think-therefore-i-am-no-llms-cannot-reason-a89e9b00754f

LLMs can be helpful for informing strategy, and for simulating strings of words that may be perceived as a strategic choice, but they don't have their own goal-oriented vision.

Oh sorry I was referring to CEOs

-

That will be a whole shitload of money for the shareholders

This makes more sense. Dammit!

-

If AI ends up running companies better than people, won’t shareholders demand the switch? A board isn’t paying a CEO $20 million a year for tradition, they’re paying for results. If an AI can do the job cheaper and get better returns, investors will force it.

And since corporations are already treated as “people” under the law, replacing a human CEO with an AI isn’t just swapping a worker for a machine, it’s one “person” handing control to another.

That means CEOs would eventually have to replace themselves, not because they want to, but because the system leaves them no choice. And AI would be considered a "person" under the law.

Wasn't it Willy Shakespeare who said "First, kill all the Shareholders"? That easily manipulated stock market only truly works for the wealthy, regardless of the harm inflicted on both humans and the environment they live in.

-

Sadly, I don't think this is going to happen. A good CEO doesn't make calculated decisions based on facts and weigh risk against profit. If he did, he would, at best, be a normal CEO. Who wants that? No, a truly great CEO does exactly what a truly bad CEO does: he takes risks that aren't proportional to the reward (and gets lucky)!

This is the only way to beat the game, just like with investments or roulette. There are no great roulette players who got rich by playing the odds, only lucky ones.

Sure, with CEOs, this is in the aggregate. I'm sure there is a genius here and a Renaissance man there... But on the whole, the best advice is "get risky and get lucky". Try it out. I highly recommend it. No one remembers a loser. And the story continues.

Well, you will be happy to hear that AI does take calculated risks, but they are not based on reality, so they are, in fact, just risks.

You can't just type "Please do not hallucinate. Do not make judgement calls based on fake news"

-

I could imagine a world where whole virtual organizations could be spun up, and they can just run in the background creating whole products, marketing them, and doing customer support, etc.

Right now the technology doesn't seem there yet, but it has been rapidly improving, so we'll see.

I could definitely see rich CEOs funding the creation of a "celebrity" bot that answers questions the way they do. Maybe with their likeness and voice, so they can keep running companies from beyond the grave. Throw it in one of those humanoid robots and they can keep preaching the company mission until the sun burns out.

What a nightmare.

I could imagine a world where whole virtual organizations could be spun up, and they can just run in the background creating whole products, marketing them, and doing customer support, etc.

Perhaps we could have it sell paperclips, with the sole goal of selling as many paperclips as possible.

Surely, selling something as innocuous as paperclips could never go wrong.

-

If AI ends up running companies better than people, won’t shareholders demand the switch? A board isn’t paying a CEO $20 million a year for tradition, they’re paying for results. If an AI can do the job cheaper and get better returns, investors will force it.

And since corporations are already treated as “people” under the law, replacing a human CEO with an AI isn’t just swapping a worker for a machine, it’s one “person” handing control to another.

That means CEOs would eventually have to replace themselves, not because they want to, but because the system leaves them no choice. And AI would be considered a "person" under the law.

All of you are missing the point.

CEOs and The Board are the same people. The majority of CEOs are board members at other companies, and vice-versa. It's a big fucking club and you ain't in it.

Why would they do this to themselves?

Secondly, we already have AI running companies. You think some CEOs and Board Members aren't already using this shit bird as a god? Because they are.

-

If AI ends up running companies better than people, won’t shareholders demand the switch? A board isn’t paying a CEO $20 million a year for tradition, they’re paying for results. If an AI can do the job cheaper and get better returns, investors will force it.

And since corporations are already treated as “people” under the law, replacing a human CEO with an AI isn’t just swapping a worker for a machine, it’s one “person” handing control to another.

That means CEOs would eventually have to replace themselves, not because they want to, but because the system leaves them no choice. And AI would be considered a "person" under the law.

That's already been broadly discussed. There are tons of articles like this one; just put "ceos replaced by ai" into your favorite search engine.

-

I could imagine a world where whole virtual organizations could be spun up, and they can just run in the background creating whole products, marketing them, and doing customer support, etc.

Right now the technology doesn't seem there yet, but it has been rapidly improving, so we'll see.

I could definitely see rich CEOs funding the creation of a "celebrity" bot that answers questions the way they do. Maybe with their likeness and voice, so they can keep running companies from beyond the grave. Throw it in one of those humanoid robots and they can keep preaching the company mission until the sun burns out.

What a nightmare.

I have been having this vision you described for quite some time now.

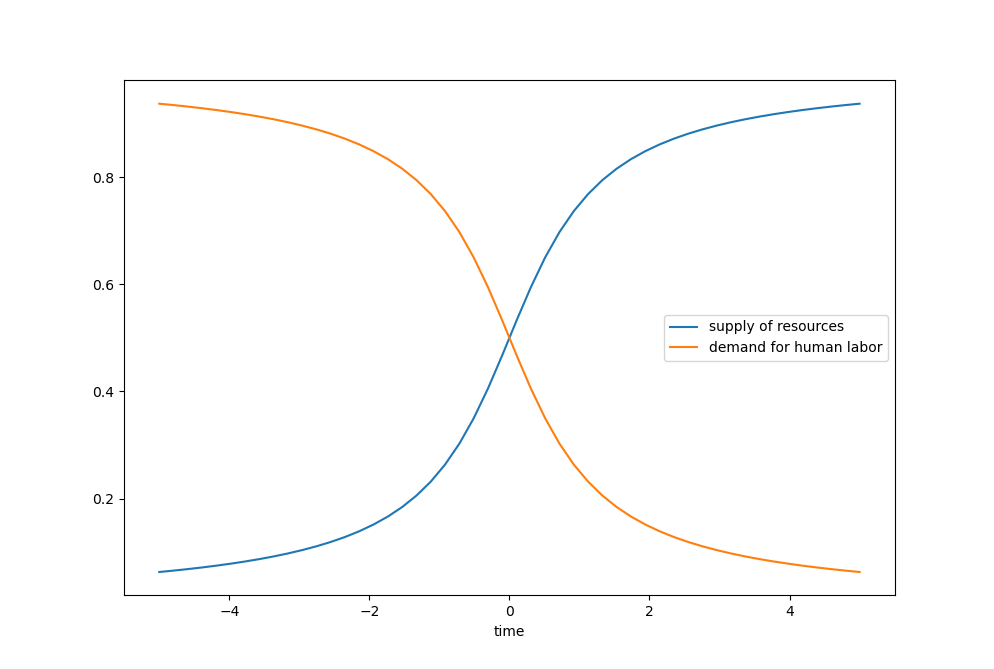

As time progresses, availability of resources on earth increases because we learn to process and collect them more efficiently; but on the other hand, number of jobs (or, demand for human labor) decreases continuously, because more and more work gets automated.

So, if you'd draw a diagram, it would look something like this:

X-axis is time. As we progress into the future, that completely changes the game. Instead of being a society that is driven by a constant shortage of resources and a constant lack of workers (causing a high demand for workers and a lot of jobs), it'd be a society with a shortage of jobs (and therefore meaningful employment), but with an abundance of resources. What do we do with such a world?

-

Current AI has no shot of being as smart as humans; it's simply not sophisticated enough.

And that's not to say that current LLMs aren't impressive; they are, but the human brain is just on a whole different level.

Just to think about it on a basic level: LLM inference can run on a few GPUs, on the order of 100 billion transistors. That's roughly on par with the number of neurons in the brain, but each neuron has on average about 10,000 connections, which are capable of rewiring themselves to new neurons.
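A rough order-of-magnitude version of this comparison, using only the figures above (about $10^{11}$ transistors, a similar number of neurons, and about $10^{4}$ connections per neuron):

$$
N_{\text{connections}} \approx N_{\text{neurons}} \times k \approx 10^{11} \times 10^{4} = 10^{15},
\qquad
\frac{N_{\text{connections}}}{N_{\text{transistors}}} \approx \frac{10^{15}}{10^{11}} = 10^{4}
$$

So even before counting neuron types or neurotransmitters, the brain has roughly ten thousand times more connections than those GPUs have transistors. Treating a connection and a transistor as comparable units is itself a big simplification.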

And there are so many distinct types of neurons, with over 10,000 unique proteins.

On top of that, there are over a hundred neurotransmitters, and we're not even sure we've identified them all.

And all of that is still connected to a system that integrates all of our senses, while current AI is pure text, with separate parts bolted onto it for other things.

Current AI has no shot of being as smart as humans, it’s simply not sophisticated enough.

You know what's also not very sophisticated? The periodic table. Yet all the variety of life (of which there is plenty) is built on it.

-

It’s amazing how quickly people dismiss technological capabilities as mundane that would have been miraculous just a few years earlier.

Yep, and it's also amazing how people think new technologies are impossible until they happen.

https://en.wikipedia.org/wiki/Flying_Machines_Which_Do_Not_Fly

"Flying Machines Which Do Not Fly" is an editorial published in the New York Times on October 9, 1903. The article incorrectly predicted it would take one to ten million years for humanity to develop an operating flying machine.[1] It was written in response to Samuel Langley's failed airplane experiment two days prior. Sixty-nine days after the article's publication, American brothers Orville and Wilbur Wright successfully achieved the first heavier-than-air flight on December 17, 1903, at Kitty Hawk, North Carolina.

-

All of you are missing the point.

CEOs and The Board are the same people. The majority of CEOs are board members at other companies, and vice-versa. It's a big fucking club and you ain't in it.

Why would they do this to themselves?

Secondly, we already have AI running companies. You think some CEOs and Board Members aren't already using this shit bird as a god? Because they are.

They would do it because the big investors (not randos with a 401(k) in an index fund, but big hedge funds) demand that AI lead the company. This could potentially be forced through at a shareholder meeting without the board having much say.

I don't think it will happen en masse for a different reason, though. The real purpose of the CEO isn't to lead the company, but to take the fall when everything goes wrong. Then they get a golden parachute and the company finds someone else. When AI fails, you can "fire" the model, but are you going to want to replace it with a different model? Most likely, the shareholders will reverse course and put a human back in charge. Then they can fire the human again later.

A few high profile companies might go for it. Then it will go badly and nobody else will try.

-

Current AI has no shot of being as smart as humans, it’s simply not sophisticated enough.

You know what's also not very sophisticated? The periodic table. Yet all the variety of life (of which there is plenty) is built on it.

At face value, the base elements are not enormously complicated. But we can't model any element other than hydrogen exactly; it's all approximations, because quantum mechanics is so complicated. And then there are molecules, which are even more hopelessly complicated, and we haven't even gotten to proteins!

By comparison, our best transistors look like toys.

-

I could imagine a world where whole virtual organizations could be spun up, and they can just run in the background creating whole products, marketing them, and doing customer support, etc.

Perhaps we could have it sell paperclips, with the sole goal of selling as many paperclips as possible.

Surely, selling something as innocuous as paperclips could never go wrong.

Certainly the CEOs will patiently ensure guardrails are in place before chasing an ROI. Right? ... Right?

Uh oh..

-

Well, you will be happy to hear that AI does take calculated risks, but they are not based on reality, so they are, in fact, just risks.

You can't just type "Please do not hallucinate. Do not make judgement calls based on fake news"

I'm not quite sure how it relates to what I said. Maybe we are looking at the word "risk" differently. Let me give a simple example that shows what I think is normally hidden by complexity.

Five CEOs are faced with the same opportunity to invest heavily in a make-or-break deal. If they take it, they either succeed or go bust. This investment, for one reason or another, can only have one winner (because we are simplifying a complex real-world problem). All five CEOs invest; four go bust and one wins big. In this simplified example, the one winning CEO would be seen as a great CEO. After all, he did great. The reasonable decision would have been not to invest, but that doesn't make you a great CEO who can move on to better, greener jobs or cash out huge bonuses. No one remembers the reasonable CEO who made expected gains without unneeded risks.
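The five-CEO example can be sketched as a tiny simulation. It keeps the example's assumption that the deal has exactly one winner, and adds one more assumption of its own: the winner is picked uniformly at random, i.e. pure luck with no skill involved. The function names are hypothetical, made up for illustration.

```python
import random


def make_or_break_round(n_ceos, rng):
    """One round of the hypothetical deal: every CEO invests, exactly one
    (chosen uniformly at random, i.e. by luck) wins, and the rest go bust."""
    winner = rng.randrange(n_ceos)
    return ["wins big" if i == winner else "goes bust" for i in range(n_ceos)]


def win_rate_per_ceo(n_ceos, rounds, seed=0):
    """Repeat the round many times and record how often each CEO wins.
    Since the winner is random, every CEO's rate converges to 1 / n_ceos."""
    rng = random.Random(seed)
    wins = [0] * n_ceos
    for _ in range(rounds):
        outcome = make_or_break_round(n_ceos, rng)
        wins[outcome.index("wins big")] += 1
    return [w / rounds for w in wins]


if __name__ == "__main__":
    # A single round always produces one "great" CEO and four failures.
    print(make_or_break_round(5, random.Random(42)))
    # Over many rounds, no CEO is actually better than the others.
    print(win_rate_per_ceo(5, 100_000))
```

Any single round crowns one "great CEO", yet over many rounds every CEO wins about 20% of the time. Under these assumptions, the winner's record says nothing about skill, which is the survivorship effect the example describes.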

-

If AI ends up running companies better than people, won’t shareholders demand the switch? A board isn’t paying a CEO $20 million a year for tradition, they’re paying for results. If an AI can do the job cheaper and get better returns, investors will force it.

And since corporations are already treated as “people” under the law, replacing a human CEO with an AI isn’t just swapping a worker for a machine, it’s one “person” handing control to another.

That means CEOs would eventually have to replace themselves, not because they want to, but because the system leaves them no choice. And AI would be considered a "person" under the law.

Things get real crazy when the shareholders are replaced by AI.