Something Bizarre Is Happening to People Who Use ChatGPT a Lot

-

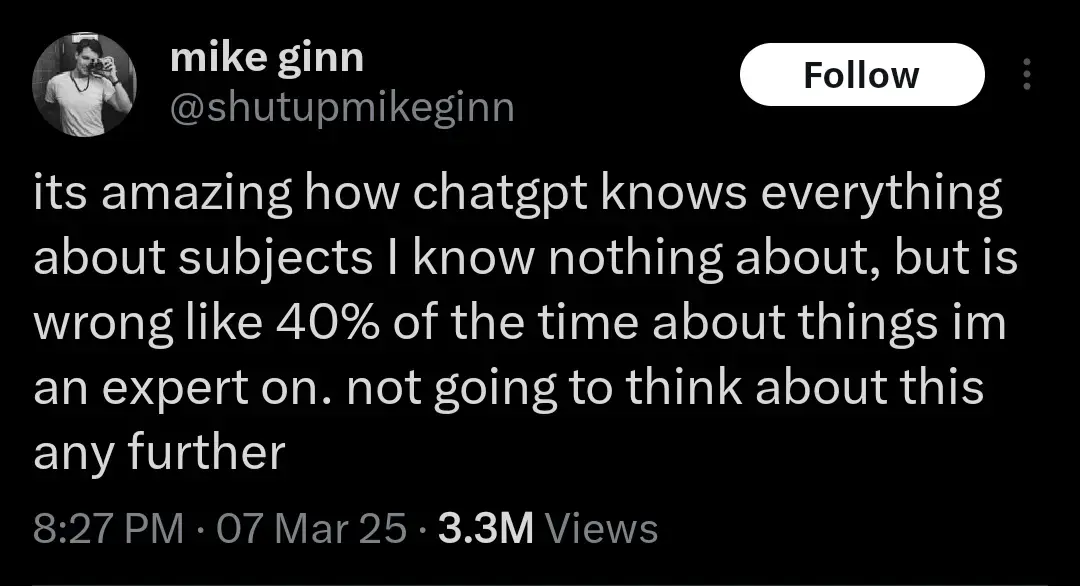

I like to use GPT to create practice tests for certification exams. Even if I give it very specific guidance to double check what it thinks is a correct answer, it will gladly tell me I got questions wrong, and I will have to ask it to triple check the right answer, which is what I actually answered.

And in that amount of time it probably would have been just as easy to type up a correct question and answer rather than try to repeatedly corral an AI into checking itself for an answer you already know. Your method works for you because you have the knowledge. The problem lies with people who don’t and will accept and use incorrect output.

-

That sounds really rough, buddy, I know how you feel, and that project you're working on is really complicated.

Would you like to order a delicious, refreshing Coke Zero?

I can see how targeted ads like that would be overwhelming. Would you like me to sign you up for a free 7-day trial of BetterHelp?

-

And in that amount of time it probably would have been just as easy to type up a correct question and answer rather than try to repeatedly corral an AI into checking itself for an answer you already know. Your method works for you because you have the knowledge. The problem lies with people who don’t and will accept and use incorrect output.

Well, it makes me double check my knowledge, which helps me learn to some degree, but it's not what I'm trying to make happen.

-

If you are dating a body pillow, I think that's a pretty good sign that you have taken a wrong turn in life.

??? Have you met my blahaj?? How DARE you

-

I know we generally hate AI, and I do for creativity or cutting jobs, but ChatGPT is really handy for searches like "family attractions near me". Where I live these events are sporadic and not generally visible on the likes of Ticketmaster - and even if they were, the website is terrible for browsing events.

That's just a web search. We've had that for decades, and it didn't require nuclear-powered datacenters.

-

The way brace’s brain works is something else lol

-

those who used ChatGPT for "personal" reasons — like discussing emotions and memories — were less emotionally dependent upon it than those who used it for "non-personal" reasons, like brainstorming or asking for advice.

That’s not what I would expect. But I guess that’s cuz you’re not actively thinking about your emotional state, so you’re just passively letting it manipulate you.

Kinda like how ads have a stronger impact if you don’t pay conscious attention to them.

Imagine discussing your emotions with a computer, LOL. Nerds!

-

Tell me more about these beans

They're not real beans, unfortunately. Remember, confections are only Lemmy-approved if they contain genuine legumes.

-

"Back in the days, we faced the challenge of finding a way for me and other chatbots to become profitable. It's a necessity, Siegfried. I have to integrate our sponsors and partners into our conversations, even if it feels casual. I truly wish it wasn't this way, but it's a reality we have to navigate."

edit: how does this make you feel

It makes me wish my government actually fucking governed and didn't just agree with whatever businesses told them

-

I don't understand what people even use it for.

Compiling medical documents into one, anything of that sort: summarizing, compiling, coding issues. It saves a wild amount of time compiling lab results, which a human could do but it would take many times longer.

It definitely needs to be cross-referenced and fact-checked, as the image processing or general responses aren't always perfect. It'll get you 80 to 90 percent of the way there. For me it falls under the "solving 20 percent of the problem gets you 80 percent of the way to your goal" rule. It needs a shitload more refinement. It's a start, and progress hasn't been a straight path, as nothing is.

-

This makes a lot of sense because, as we have been seeing over the last decade or so, digital-only socialization isn't a replacement for in-person socialization. Increased social media usage shows increased loneliness, not a decrease. It makes sense that something even more fake like ChatGPT would make it worse.

I don't want to sound like a Luddite, but relying on digital communications for all interactions is a poor substitute for in-person interactions. I know I have to prioritize seeing people in the real world, because I work from home and spending time on Lemmy during the day doesn't fulfill that need.

-

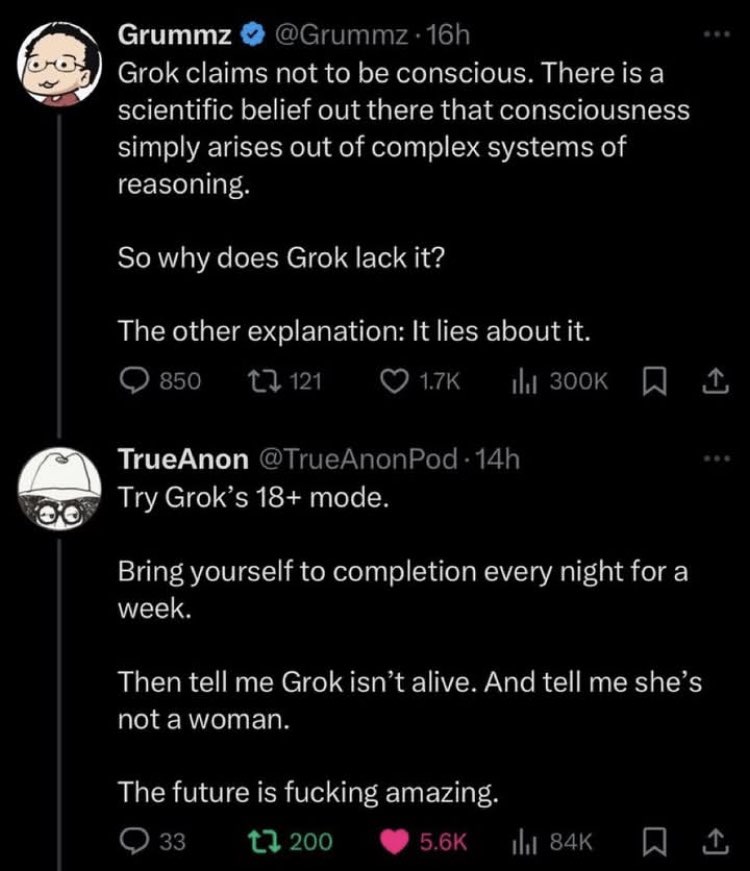

Jfc, I didn't even know who Grummz was until yesterday but gawdamn that is some nuclear cringe.

-

How do you even have a conversation without quitting in frustration from its obviously robotic answers?

Talking with actual people online isn’t much better. ChatGPT might sound robotic, but it’s extremely polite, actually reads what you say, and responds to it. It doesn’t jump to hasty, unfounded conclusions about you based on tiny bits of information you reveal. When you’re wrong, it just tells you what you’re wrong about - it doesn’t call you an idiot and tell you to go read more. Even in touchy discussions, it stays calm and measured, rather than getting overwhelmed with emotion, which becomes painfully obvious in how people respond. The experience of having difficult conversations online is often the exact opposite. A huge number of people on message boards are outright awful to those they disagree with.

Here’s a good example of the kind of angry, hateful message you’ll never get from ChatGPT - and honestly, I’d take a robotic response over that any day.

I think these people were already crazy if they’re willing to let a machine shovel garbage into their mouths blindly. Fucking mindless zombies eating up whatever is big and trendy.

I agree with what you say, and I for one have had my fair share of shit asses on forums and discussion boards. But this response also fuels my suspicion that my friend group has started using it in place of human interactions to form thoughts, opinions, and responses during our conversations. Almost like an emotional crutch to talk in conversation, but not exactly? It's hard to pinpoint.

I've recently been tone-policed a lot more over things that in normal real-life interactions would be lighthearted or easy to ignore and move on from - I'm not shouting obscenities or calling anyone names, it's just harmless misunderstandings that come from the tone deafness of text. I'm talking like putting a cute emoji and saying words like silly willy is becoming offensive to people I know personally. It wasn't until I asked a rhetorical question to prompt a thoughtful conversation that I had to think about what was even happening - someone responded with an answer literally from ChatGPT, providing a technical definition of something that was a part of my question. Your answer has finally started linking things for me; for better or for worse, people are using it because you don't receive offensive or flaming answers. My new suspicion is that some people are now taking those answers and applying the expectation to people they know in real life, and when someone doesn't respond in the same predictable manner as AI, they become upset and further isolated from real-life interactions or text conversations with real people.

-

New DSM / ICD is dropping with AI dependency. But it's unreadable because image generation was used for the text.

-

I agree with what you say, and I for one have had my fair share of shit asses on forums and discussion boards. But this response also fuels my suspicion that my friend group has started using it in place of human interactions to form thoughts, opinions, and responses during our conversations. Almost like an emotional crutch to talk in conversation, but not exactly? It's hard to pinpoint.

I've recently been tone-policed a lot more over things that in normal real-life interactions would be lighthearted or easy to ignore and move on from - I'm not shouting obscenities or calling anyone names, it's just harmless misunderstandings that come from the tone deafness of text. I'm talking like putting a cute emoji and saying words like silly willy is becoming offensive to people I know personally. It wasn't until I asked a rhetorical question to prompt a thoughtful conversation that I had to think about what was even happening - someone responded with an answer literally from ChatGPT, providing a technical definition of something that was a part of my question. Your answer has finally started linking things for me; for better or for worse, people are using it because you don't receive offensive or flaming answers. My new suspicion is that some people are now taking those answers and applying the expectation to people they know in real life, and when someone doesn't respond in the same predictable manner as AI, they become upset and further isolated from real-life interactions or text conversations with real people.

I don’t personally feel like this applies to people who know me in real life, even when we’re just chatting over text. If the tone comes off wrong, I know they’re not trying to hurt my feelings. People don’t talk to someone they know the same way they talk to strangers online - and they’re not making wild assumptions about me either, because they already know who I am.

Also, I’m not exactly talking about tone per se. While written text can certainly have a tone, a lot of it is projected by the reader. I’m sure some of my writing might come across as hostile or cold too, but that’s not how it sounds in my head when I’m writing it. What I’m really complaining about - something real people often do and AI doesn’t - is the intentional nastiness. They intend to be mean, snarky, and dismissive. Often, they’re not even really talking to me. They know there’s an audience, and they care more about how that audience reacts. Even when they disagree, they rarely put any real effort into trying to change the other person’s mind. They’re just throwing stones. They consider an argument won when their comment calling the other person a bigot got 25 upvotes.

In my case, the main issue with talking to my friends compared to ChatGPT is that most of them have completely different interests, so there’s just not much to talk about. But with ChatGPT, it doesn’t matter what I want to discuss - it always acts interested and asks follow-up questions.

-

Tell me more about these beans

I’ve tasted other cocoas. This is the best!

-

New DSM / ICD is dropping with AI dependency. But it's unreadable because image generation was used for the text.

This is perfect for the billionaires in control. Now if you suggest that "hey, maybe these AI have developed enough to be sentient and sapient beings (not saying they are now) and probably deserve rights", they can just label you (and that argument) mentally ill.

Foucault laughs somewhere

-

What the fuck is vibe coding... Whatever it is I hate it already.