Handful of users claim new Nvidia GPUs are melting power cables again

-

c13 plug

Who would've thought.... 3DFX was apparently ahead of its time...

-

I really appreciate the specificity of the headline, rather than the clickbait it could have been.

-

Better would have been:

Handful of users complain about 3rd Party Cables Melting Their 5090FE.

-

Yeah, my 3090 K|ngP|n pulls over 500w easily, but that's over three dedicated 8-pin PCIe cables. Power delivery was something I took seriously when getting that card installed, as well as cooling. I made sure my 1300w PSU had plenty of dedicated PCIe ports.

-

Is this the fabled Bitchin' Fast 3d?

-

As it so happens, around a decade ago there was a period when they tried to make graphics cards more energy efficient rather than just more powerful, so for example the GTX 1050 Ti, which came out in 2016, had a TDP of 75W.

Of course, people had to actually "sacrifice" themselves by not having 200 fps @ 4K in order to use a lower TDP card.

(Curiously your 300W GTX780 had all of 21% more performance than my 75W GTX1050 Ti).
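Taking those two figures at face value (300W with 21% more performance versus 75W), a quick sketch of the performance-per-watt gap looks like this. The numbers are only the ones quoted in this comment, not measurements, and the dictionary layout is just for illustration.

```python
# Performance per watt, taking the figures above at face value.
# Performance is relative: the GTX 1050 Ti is the 1.0 baseline.
gtx_780 = {"watts": 300, "relative_perf": 1.21}
gtx_1050_ti = {"watts": 75, "relative_perf": 1.00}

def perf_per_watt(card):
    """Relative performance delivered per watt of TDP."""
    return card["relative_perf"] / card["watts"]

advantage = perf_per_watt(gtx_1050_ti) / perf_per_watt(gtx_780)
print(f"1050 Ti perf/W advantage: {advantage:.2f}x")
```

On those numbers the 75W card does a bit more than three times the work per watt.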

Recently I upgraded my graphics card and again chose based on TDP, amongst other things. My new one (whose model I don't remember right now) has a TDP of 120W (I looked really hard and you can't find anything decent with a 75W TDP). Of course, it will never give me the top of the range performance I could get from something 4x the price and power consumption, but as it so happens I'm mostly playing Terraria at the moment, so "top of the range" graphics performance would be an incredible waste.

When I was looking around for that upgrade there were lots of higher performance cards around the 250W TDP mark.

All this to say that people choosing 300W+ cards can only blame themselves for having deprioritized power consumption so much in their choice.

-

Well, only a handful of users exist.

-

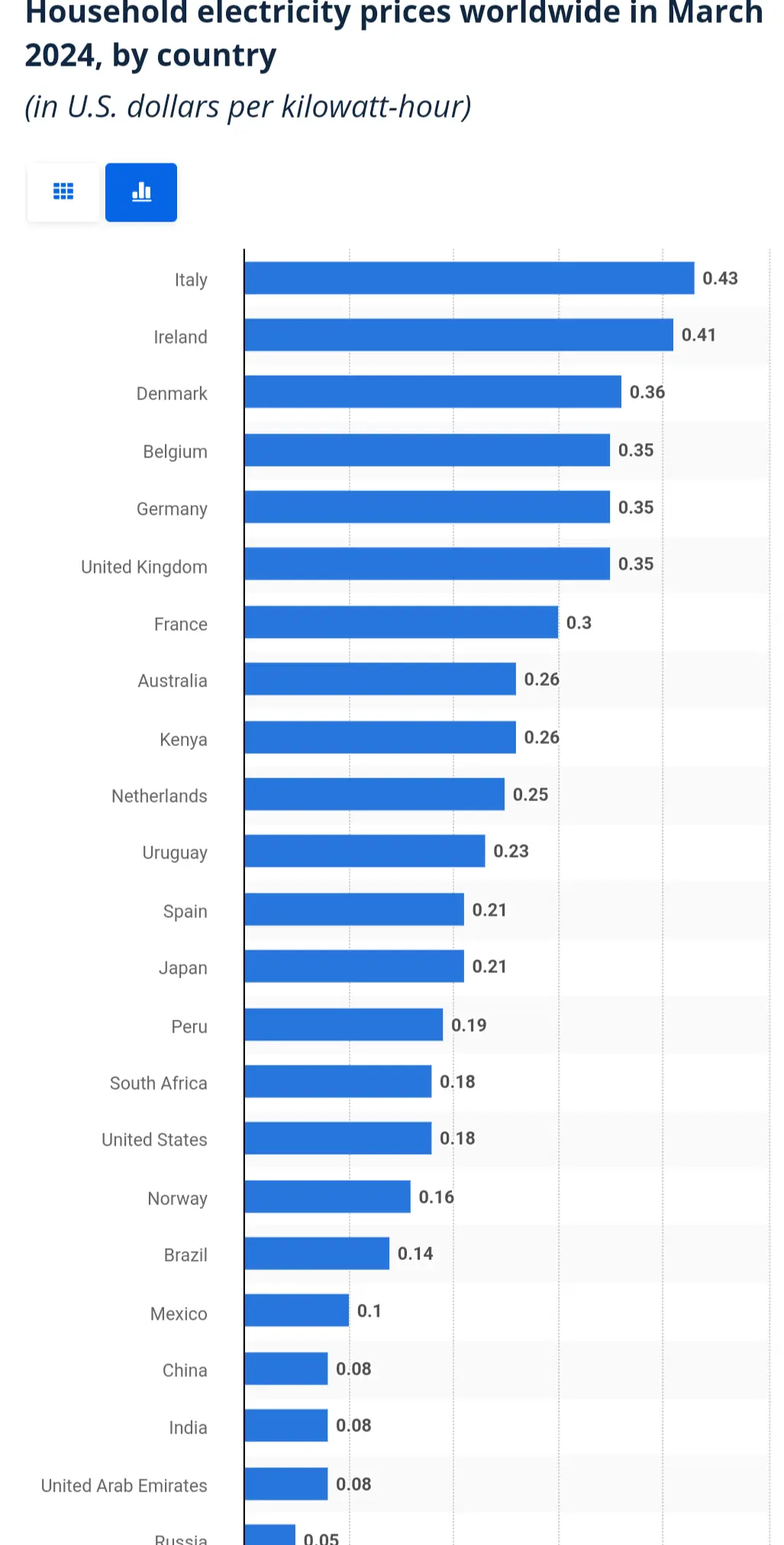

10¢ a kWh is fucking cheap in a global context. 3x that is not uncommon.

https://www.statista.com/statistics/263492/electricity-prices-in-selected-countries/

-

1080ti days were just simpler times!

-

I never get the newest thing. People who buy brand new stuff are just beta testers for the product imo

-

I can really recommend both Der 8auer's video and Buildzoid's video on this. Der 8auer has good thermal imaging of the imbalanced current across the wires between two 12VHPWR plugs, and Buildzoid explains why the current can't be balanced with the current setup on 5090s.

-

Most expensive beta test ever for consumer-grade GPUs.

-

The problem seems to be load balancing, or lack thereof. A German guy on YouTube noticed that his cable got up to 150°C at the PSU end because one wire was delivering 20 or 22 amps while the others had a lot less pumped through them. 22 amps is pretty much half the power draw of the card, going through one wire instead of the three it would be spread over if the load were properly balanced. That's why it ran so hot and melted to shit.
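To put rough numbers on that, here is a back-of-the-envelope sketch. Only the 22 amp reading comes from the comment above; the 12 V rail, six current-carrying 12 V wires, 575 W board power, and a per-pin rating of about 9.5 A are assumptions plugged in for illustration.

```python
# Back-of-the-envelope numbers for an unbalanced 12VHPWR cable.
# Assumed values (not from the thread): 12 V rail, six 12 V wires,
# 575 W board power, ~9.5 A per-pin rating.
RAIL_VOLTS = 12.0
WIRES = 6
CARD_WATTS = 575.0
PIN_RATING_AMPS = 9.5
HOT_WIRE_AMPS = 22.0  # the reading quoted above

total_amps = CARD_WATTS / RAIL_VOLTS          # current the card needs overall
balanced_amps = total_amps / WIRES            # per-wire current if shared evenly
hot_wire_watts = HOT_WIRE_AMPS * RAIL_VOLTS   # power carried by the hot wire
share_of_card = hot_wire_watts / CARD_WATTS

# Resistive heating scales with the square of the current (P = I^2 * R),
# so this ratio does not depend on the wire's actual resistance.
heating_ratio = (HOT_WIRE_AMPS / balanced_amps) ** 2

print(f"balanced current per wire: {balanced_amps:.1f} A")
print(f"hot wire carries: {hot_wire_watts:.0f} W ({share_of_card:.0%} of the card)")
print(f"heating vs. balanced wire: {heating_ratio:.1f}x")
print(f"over assumed pin rating: {HOT_WIRE_AMPS > PIN_RATING_AMPS}")
```

Under those assumptions the hot wire carries a bit under half the card's power and dissipates several times the heat it would with an even split, which is consistent with the melting described above.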

-

The 8800 Ultra was 170 watts in 06

The GTX 280 was 230 in 08.

The GTX 480/580 was 250 in 2010. But then we got the GTX 590 dual GPU which more or less doubled that.

The 680 was a drop, but then they added the Tis/Titans and that brought us back up to high TDP flagships.

These cards have always been high for the time, but that quickly became normalized. Remember when 95 watt CPUs were really high? Yeah, that's a joke compared to modern CPUs. My laptop's CPU draws 95 watts.

-

High end GPUs are always pushed just past their peak efficiency. If you slightly underclock and undervolt them you can see some incredible performance per watt.

I have a 4090 that's undervolted as low as it will go (0.875v on the core, more or less stock speeds) and it only draws about 250 watts while still providing like 80%+ of the stock card's performance. I had an undervolt that went to about 0.9 or 0.925v on the core with a slight overclock and I got stock speeds at about 300 watts. Heavy RT will make the consumption spike closer to the 450 watt TDP, but that just puts me back at the same performance as without the undervolt, because the card was already downclocking to those speeds. About 70 of that 250 watts is my VRAM, so it could scale a bit better if I found the right sweet spot.

My GTX 1080 before that was undervolted, but left at maybe 5% less than stock clocks, and it went from 180w to 120 or less.
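For a sense of scale, here is a rough sketch of the efficiency gain those numbers imply. It assumes the stock 4090 actually draws its full 450 watt TDP and that the 1080's roughly 5% lower clocks mean roughly 5% lower performance; both are simplifications, and `efficiency_gain` is just an illustrative helper.

```python
# Rough performance-per-watt comparison using the figures quoted above.
# "relative_perf" is a fraction of stock performance. Assumptions: the stock
# 4090 draws its full 450 W TDP, and the 1080's ~5% lower clocks cost ~5%
# performance.
configs = {
    "4090 stock":       {"watts": 450, "relative_perf": 1.00},
    "4090 undervolted": {"watts": 250, "relative_perf": 0.80},
    "1080 stock":       {"watts": 180, "relative_perf": 1.00},
    "1080 undervolted": {"watts": 120, "relative_perf": 0.95},
}

def efficiency_gain(stock, tuned):
    """Return how many times more performance per watt `tuned` delivers."""
    stock_ppw = stock["relative_perf"] / stock["watts"]
    tuned_ppw = tuned["relative_perf"] / tuned["watts"]
    return tuned_ppw / stock_ppw

gain_4090 = efficiency_gain(configs["4090 stock"], configs["4090 undervolted"])
gain_1080 = efficiency_gain(configs["1080 stock"], configs["1080 undervolted"])

print(f"4090 undervolt: {gain_4090:.2f}x performance per watt")
print(f"1080 undervolt: {gain_1080:.2f}x performance per watt")
```

Both cards land at somewhere over 1.4x the performance per watt on these figures, which fits the point that stock settings sit past the efficiency peak.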

-

And it burns, burns, burns

The gpu of fire

The gpu of fire

-

So, when is the first party cable going to be launched?

-

Every picture I've seen has been an outside pin. So my theory is that the cable is getting tugged for cable management and, even though it's clipped in, it's not making as good a contact.

That or just a bad cable design. I've bought a few cables from CableMod and I'm not happy with the wiring they used. Their website says "Crafted with 16AWG wiring", but they also brag about the flexibility of their cables, so I assume they're using stranded wires instead of a solid core, which means you lose a decent chunk of ampacity (and heat sinking).

-

https://youtu.be/Ndmoi1s0ZaY?si=SWRjC4AgseXSmOrm

https://youtu.be/kb5YzMoVQyw?si=eSGnUbFv6OJ4jtEg

It's poor design on Nvidia's part. There is no load balancing.

-

You probably mean der8auer?