Why I am not impressed by A.I.

-

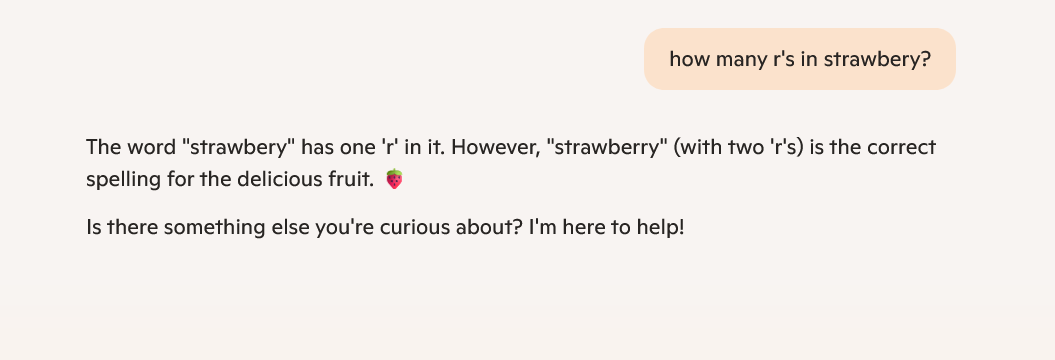

Fair enough - sounds like they might not be ready for prime time though.

Oh well, at least while the bugs get ironed out we're not using them for anything important.

-

And apparently, they still can't get an accurate result with such a basic query.

And yet...

https://futurism.com/openai-signs-deal-us-government-nuclear-weapon-security

-

But to be fair, as people we would not ask "how many Rs does strawberry have?" but rather "with how many Rs do you spell strawberry?" or "do you spell strawberry with 1 R or 2 Rs?"

-

They are not random per se. They are statistical, with some degree of randomization.
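To make "statistical with some randomization" concrete, here is a toy sketch of temperature sampling. The token list and scores are made up for illustration, and real models work over far larger vocabularies, so treat this as a rough picture rather than how any particular product does it.

```python
import math
import random

def softmax_with_temperature(logits, temperature):
    """Turn raw scores into probabilities; a lower temperature sharpens them."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_token(tokens, logits, temperature, rng):
    """Pick one token at random, weighted by its probability."""
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(tokens, weights=probs, k=1)[0]

# Hypothetical scores for the next token; not taken from any real model.
tokens = ["two", "three", "four"]
logits = [2.0, 1.5, 0.1]

rng = random.Random(0)  # fixed seed so a run is repeatable
print(softmax_with_temperature(logits, 1.0))
print([sample_token(tokens, logits, 1.0, rng) for _ in range(5)])
```

The highest-scoring token is the most likely pick, but not the only possible one, which is why the same prompt can give different answers on different runs.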

-

Exactly. The naming of the technology would make you assume it's intelligent. It's not.

-

Sure, but I definitely wouldn’t confidently answer “two”.

-

I have thought a lot about this. The LLM per se would not know whether the question is answerable, as it doesn't know if its output is good or bad.

So there are various approaches to this issue:

- The classic approach, and the one used for censoring: keywords. When the LLM gets a certain keyword, or can derive one by digesting the text input, it gives back a hard-coded answer. The problem is that while censored topics are limited, hard-to-answer questions are unlimited, so it's hard to hard-code them all.

- Self-checking answers. For every question, the LLM could process it 10 times with different seeds, then analyze the results and see if they are equivalent. If they are not, it just answers that it's unsure. Problems: resource usage is multiplied, and for some questions, like the one in the post, it's possible that the multiple randomized answers give equivalent results, so it would still have a decent failure rate.
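The self-check idea could be sketched roughly like this. `ask(question, seed)` is a hypothetical stand-in for a model call, the two fake "models" exist only for the demo, and "equivalent" is simplified to exact string match, which a real system would need to loosen.

```python
from collections import Counter

def self_check(ask, question, runs=10, min_agreement=0.8):
    """Ask the same question with several seeds; answer only if runs agree.

    `ask` is a hypothetical callable: ask(question, seed) -> str.
    """
    answers = [ask(question, seed).strip().lower() for seed in range(runs)]
    best, count = Counter(answers).most_common(1)[0]
    if count / runs >= min_agreement:
        return best
    return "I'm not sure."

# Stand-in "models" for demonstration only.
def consistent_but_wrong(question, seed):
    return "2"

def inconsistent(question, seed):
    return ["2", "3", "10"][seed % 3]

print(self_check(consistent_but_wrong, "How many Rs in strawberry?"))
print(self_check(inconsistent, "How many Rs in strawberry?"))
```

The first call shows the failure mode: ten runs that agree on the wrong answer pass the check, and the cost is still ten model calls per question.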

-

Why would it not know? It certainly “knows” that it’s an LLM and it presumably “knows” how LLMs work, so it could piece this together if it was capable of self-reflection.

-

I’ve been avoiding this question up until now, but here goes:

Hey Siri …

- how many r’s in strawberry? 0

- how many letter r’s in the word strawberry? 10

- count the letters in strawberry. How many are r’s? ChatGPT …..2
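For comparison, a plain character count. This is just the deterministic baseline to check the answers above against, not a claim about what Siri or ChatGPT do internally.

```python
word = "strawberry"

# Count occurrences of the letter and note where they sit (1-based).
count = word.count("r")
positions = [i + 1 for i, ch in enumerate(word) if ch == "r"]

print(count, positions)
```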

-

It doesn't know shit. It's not a thinking entity.

-

I get that it's usually just a dunk on AI, but it is also still a valid demonstration that AI has pretty severe and unpredictable gaps in functionality, in addition to failing to properly indicate confidence (or lack thereof).

People who understand that it's a glorified autocomplete will know how to disregard or prompt around some of these gaps, but this remains a litmus test because it succinctly shows you cannot trust an LLM response even in many "easy" cases.

-

Creating software is a great example, actually. Coding absolutely requires reasoning. I’ve tried using code-focused LLMs to write blocks of code, or even some basic YAML files, but the output is often unusable.

It rarely makes syntax errors, but it will do things like reference libraries that haven’t been imported or hallucinate functions that don’t exist. It also constantly misunderstands the assignment and creates something that technically works but doesn’t accomplish the intended task.
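One cheap guard against the hallucinated-function case is to check that a name actually exists before trusting the generated code. A minimal sketch using only the standard library; `attribute_exists` is a helper name made up here, and it does nothing about code that runs fine but misses the intended task.

```python
import importlib

def attribute_exists(module_name, attribute):
    """Return True if `module_name` imports and exposes `attribute`."""
    try:
        module = importlib.import_module(module_name)
    except ImportError:
        # Covers modules that are not installed or do not exist at all.
        return False
    return hasattr(module, attribute)

print(attribute_exists("json", "loads"))      # real function
print(attribute_exists("json", "load_yaml"))  # made-up function
print(attribute_exists("not_a_real_lib_xyz", "anything"))  # made-up library
```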

-

Precisely, it's not capable of self-reflection, thinking, or anything of the sort. It doesn't even understand the meaning of words.

-

But the problem is more "my do-it-all tool randomly fails at arbitrary tasks in an unpredictable fashion", making it hard to trust as a tool in any circumstances.

-

But everyone selling LLMs sells them as being able to solve any problem, making it hard to know when one is going to fail and give you junk.