DeepSeek's AI breakthrough bypasses industry-standard CUDA, uses assembly-like PTX programming instead

-

This sounds like good engineering, but surely there's not a big gap with their competitors. They are spending tens of millions on hardware and energy, and this is something a handful of (very good) programmers should be able to pull off.

Unless I'm missing something, it's the sort of thing that's done all the time on console games.

-

I think it's more like it was done all the time for console games. These days that doesn't happen as much anymore, as far as I know. But I think this shows that either CUDA is not a good enough abstraction for modern GPUs or the compilers are not as good as expected. There should be no way they got that much optimization out of hand-written/optimized code these days.

-

They said this is close to metal. Wake me up when they've achieved metal.

-

What is amazing in this case is that they managed to spend only a fraction of the inference cost that OpenAI is paying.

Plus they are a lot cheaper too. But I am pretty sure that the American government will ban them in no time, citing national security concerns, etc.

Nevertheless, I think we need more open source models.

Not to mention that NVIDIA also needs to be brought to earth.

-

Even if they get banned, any startup could replicate their work if it is truly open source. The best thing about their solution is that it breaks the CUDA monopoly that NVDA has enjoyed. Buy your puts when NVDA bounces because that stock is GOING DOWN. There's no world where a company that makes GPUs is worth more than both Apple and Microsoft. It's inevitable.

-

Reminds me of Bitcoin mining and how ASIC miners overtook graphics card mining practically overnight. It would not surprise me if this goes the same way.

-

This is why Nvidia stock has been hit so hard. CUDA is their moat.

-

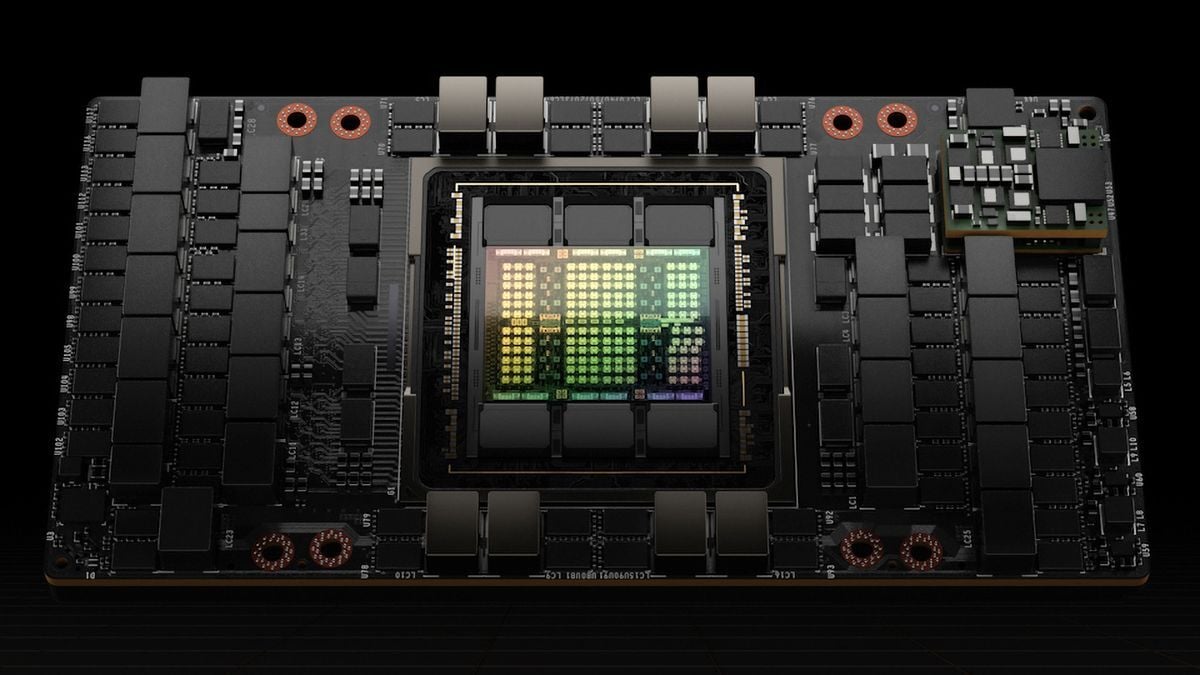

There seems to be some confusion here about what PTX is: it does not bypass the CUDA platform at all, nor does it diminish NVIDIA's monopoly. CUDA is a programming environment for NVIDIA GPUs, but many people say "CUDA" to mean the C/C++ extension within it (CUDA can be thought of as a C/C++ dialect here). PTX is NVIDIA-specific and sits at a similar level to LLVM's IR. If anything, DeepSeek is more dependent on NVIDIA than everyone else, since PTX is tightly tied to their specific GPUs. Things like ZLUDA (an effort to run CUDA code on AMD GPUs) won't work. This is not a feel-good story.
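To make the relationship concrete, here is a minimal sketch (my own illustration, not anything from DeepSeek's code) of PTX being used from inside an ordinary CUDA C++ program. The names `add_via_ptx` and `add_kernel` are made up for this example. It assumes an NVIDIA GPU and the `nvcc` compiler from the CUDA toolkit, which is exactly the point: the PTX instruction lives inside the CUDA toolchain and does not replace it.

```cuda
#include <cstdio>
#include <cuda_runtime.h>

// Hypothetical helper: adds two ints with one inline PTX instruction
// instead of the C++ `+` operator. "r" marks a 32-bit register operand.
__device__ int add_via_ptx(int a, int b) {
    int result;
    asm("add.s32 %0, %1, %2;" : "=r"(result) : "r"(a), "r"(b));
    return result;
}

// Hypothetical kernel: each thread adds one pair of elements.
__global__ void add_kernel(const int* a, const int* b, int* out, int n) {
    int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i < n) {
        out[i] = add_via_ptx(a[i], b[i]);
    }
}

int main() {
    const int n = 4;
    int host_a[n] = {1, 2, 3, 4};
    int host_b[n] = {10, 20, 30, 40};
    int host_out[n] = {0, 0, 0, 0};

    // Allocate device buffers and copy the inputs over.
    int *dev_a = nullptr, *dev_b = nullptr, *dev_out = nullptr;
    cudaMalloc(&dev_a, n * sizeof(int));
    cudaMalloc(&dev_b, n * sizeof(int));
    cudaMalloc(&dev_out, n * sizeof(int));
    cudaMemcpy(dev_a, host_a, n * sizeof(int), cudaMemcpyHostToDevice);
    cudaMemcpy(dev_b, host_b, n * sizeof(int), cudaMemcpyHostToDevice);

    // Launch through the normal CUDA runtime, even though the math is PTX.
    add_kernel<<<1, n>>>(dev_a, dev_b, dev_out, n);
    cudaError_t err = cudaGetLastError();
    if (err != cudaSuccess) {
        std::printf("launch failed: %s\n", cudaGetErrorString(err));
        return 1;
    }

    // This copy also waits for the kernel to finish.
    cudaMemcpy(host_out, dev_out, n * sizeof(int), cudaMemcpyDeviceToHost);
    for (int i = 0; i < n; ++i) {
        std::printf("%d + %d = %d\n", host_a[i], host_b[i], host_out[i]);
    }

    cudaFree(dev_a);
    cudaFree(dev_b);
    cudaFree(dev_out);
    return 0;
}
```

Built with something like `nvcc example.cu -o example`, the hand-written PTX line still goes through NVIDIA's compiler, runtime, and driver. Writing PTX by hand gives finer control over the instructions the GPU sees, but it ties the code more closely to NVIDIA hardware, not less.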

-

Never forget, kids: the market can stay irrational much longer than you can stay solvent.

-

True.

That's why I tend to make small plays instead of being an absolute degenerate gambler.

-

I don't think anyone is saying CUDA as in the platform, but as in the API for higher level languages like C and C++.

-

Some commenters on this post are clearly not aware of PTX being a part of the CUDA environment. If you know this, you aren't who I'm trying to inform.

-

aah I see them now

-

I wish that was true, but this doesn't threaten any monopoly.

-

It certainly does.

Until last week, you absolutely NEEDED an NVidia GPU equipped with CUDA to run all AI models.

Today, that is simply not true (watch the video at the end of this comment). I watched this video and my initial reaction to this news was validated and then some: this video made me even more bearish on NVDA.

-

This specific tech is, yes, nvidia dependent. The game changer is that a team was able to beat the big players with less than 10 million dollars. They did it by operating at a low level of nvidia's stack, practically machine code. What this team has done, another could do. Building for AMD GPU ISA would be tough but not impossible.

-

Mate, that means they are using PTX directly. If anything, they are more dependent on NVIDIA and the CUDA platform than anyone else.

-

you absolutely NEEDED an NVidia GPU equipped with CUDA

-

Ahh. Thanks for this insight.