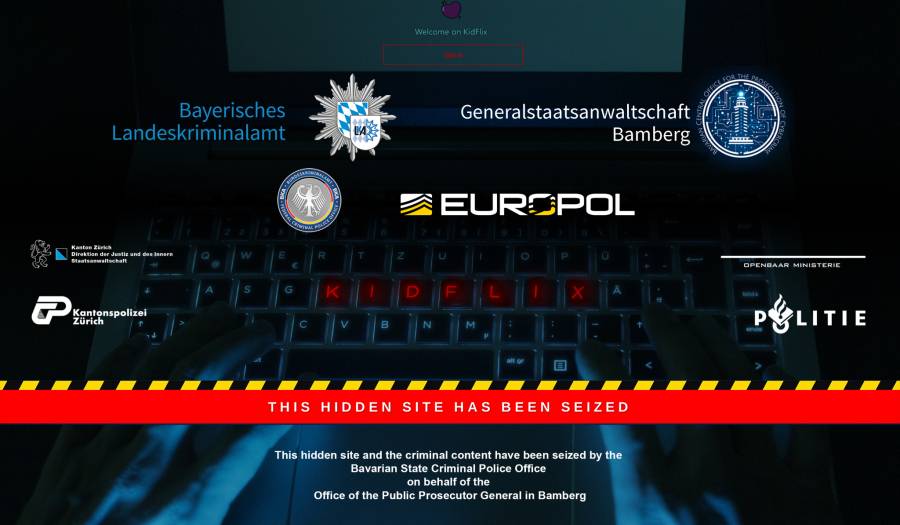

European police say KidFlix, "one of the largest pedophile platforms in the world," busted in joint operation.

-

it says “this hidden site”, meaning it was a site on the dark web.

Not just on the dark web. Anything not indexed by search engines is technically the deep web; hidden sites are specifically a Tor thing (Freenet/Hyphanet has something similar, but it's called something else). A Tor hidden site (officially an onion service) has an address that ends in .onion, and the Tor protocol has its own structure for routing .onion addresses.

-

They have to deal with old men masturbating to them getting raped online.

The moment it was posted to wherever, they were going to have to deal with that forever. It's not like they can ever know for certain that every copy of it ever made has been deleted.

-

eventually they want to move on to the real thing, as porn is not satisfying them anymore.

Isn't this basically the same as arguing that violent media creates killers?

-

...and most of the people who agree with that notion would also consider reading Lemmy to be "trawling dark waters" because it's not a major site run by a massive corporation actively working to maintain advertiser friendliness to maximize profits. Hell, Matrix is practically Lemmy-adjacent in terms of the tech.

-

Understand you’re not advocating for it, but I do take issue with the idea that AI CSAM will prevent children from being harmed. While it might satisfy some of them (at first, until the high from that wears off and they need progressively harder stuff), a lot of pedophiles are just straight up sadistic fucks and a real child being hurt is what gets them off. I think it’ll just make the “real” stuff even more valuable in their eyes.

-

Geez, two million? Good riddance. Great job everyone!

-

Maybe Jeff Bezos will write an article about him and editorialize about "personal liberty". I have to keep posting this because every day another MAGA lover, religious bigot, or otherwise pretend-upstanding community member is indicted or arrested for heinous acts against women and children.

-

No judge would authorise a honeypot that runs for multiple years hosting original child abuse material, since that would mean children are actively being abused to produce content for it. That would be an unspeakable atrocity. A few years ago the Australian police seized a similar website and ran it for a matter of weeks to gather intelligence, and even that was considered too far by many.

-

none of this applies to the comment they cited as an example of defending pedophilia.

-

I feel the same way. I've seen the argument that it's analogous to violence in videogames, but it's pretty disingenuous since people typically play videogames to have fun and for escapism, whereas with CSAM the person seeking it out is doing so in bad faith. A more apt comparison would be people who go out of their way to hurt animals.

-

Most cases of "we can't find anyone good for this job" can be solved with better pay. Make your opening more attractive, then you'll get more applicants and can afford to be picky.

Getting the money is a different question, unless you're willing to touch the sacred corporate profits....

-

Lmfao as I stated, they said that physical sexual abuse "PROBABLY" harms kids but they have only done research into their voyeurism kink as it applies to children.

Go off defending pedos, though

-

They literally investigated specific time frames of their voyeurism kink in medieval times extensively, but couldn't be bothered to do the most basic of research that sex abuse is harmful to children.

-

"Clearly in their late teens," sure.

Obviously there's a difference between AI porn and real, that's why I told you to search AI in the first place??? The convo isn't about AI porn, but AI porn uses existing images, including CSAM, to seed its new images.

-

He wants children to full on watch adults have sex because he has a voyeurism kink. Solved that for you.