AI haters build tarpits to trap and trick AI scrapers that ignore robots.txt

-

That's the reason for the maze. These companies have multiple IP addresses and bots that communicate with each other.

They can go through multiple entries in the robots.txt file. Once they learn they are banned, they go scrape the old-fashioned way with another IP address.

But if you create a maze, they just continually scrape useless data, rather than scraping data you don't want them to get.

-

That only works if they are stupid and scrape serially. The AI can have one "thread" caught in the tar while other "threads" continue to steal your content.

With a ban, they would have to keep track of what banned them so they don't hit it again and get yet another of their IP ranges banned.

-

Don't make me tap the sign

-

Banning IP ranges isn't going to work. A lot of these companies rent residential (home) IP addresses.

Also the point isn't just protecting content, it's data poisoning.

-

The big search engine crawlers like Google's or Microsoft's should respect your robots.txt file. This trick affects those who don't honor the file and just scrape your website even if you told them not to.
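For anyone wondering what "respecting robots.txt" looks like on the crawler side, here is a minimal sketch using Python's standard `urllib.robotparser`. The bot name `ExampleAIBot`, the rules, and the URLs are made up for illustration; a real crawler would fetch the site's actual robots.txt instead of using an inline string.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: the bot name and paths are invented for this example.
ROBOTS_TXT = """\
User-agent: ExampleAIBot
Disallow: /

User-agent: *
Disallow: /private/
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text into a rule set a crawler can query."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def may_fetch(parser: RobotFileParser, user_agent: str, url: str) -> bool:
    """A well-behaved crawler asks this before every request."""
    return parser.can_fetch(user_agent, url)


parser = build_parser(ROBOTS_TXT)
```

A polite crawler skips any URL where `may_fetch` returns `False`. The tarpit only ever sees the ones that never ask.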

-

So they will have some threads caught in the pit and other threads stealing content. Not only did you waste time with a tar pit, your content still gets stolen.

Any scraper worth its salt, especially with LLMs, would have garbage detection of some sort, so poisoning the model is likely not effective. They likely have more resources than you, so a few spinning threads is trivial. All the while, your server still has to service all these requests for garbage that is likely ineffective, wasting bandwidth you have to pay for and cycles that could be better spent actually doing something, and your content STILL gets stolen.

-

Waiting for Apache or Nginx to import a robots.txt and send crawlers down a rabbit hole instead of trusting them.

-

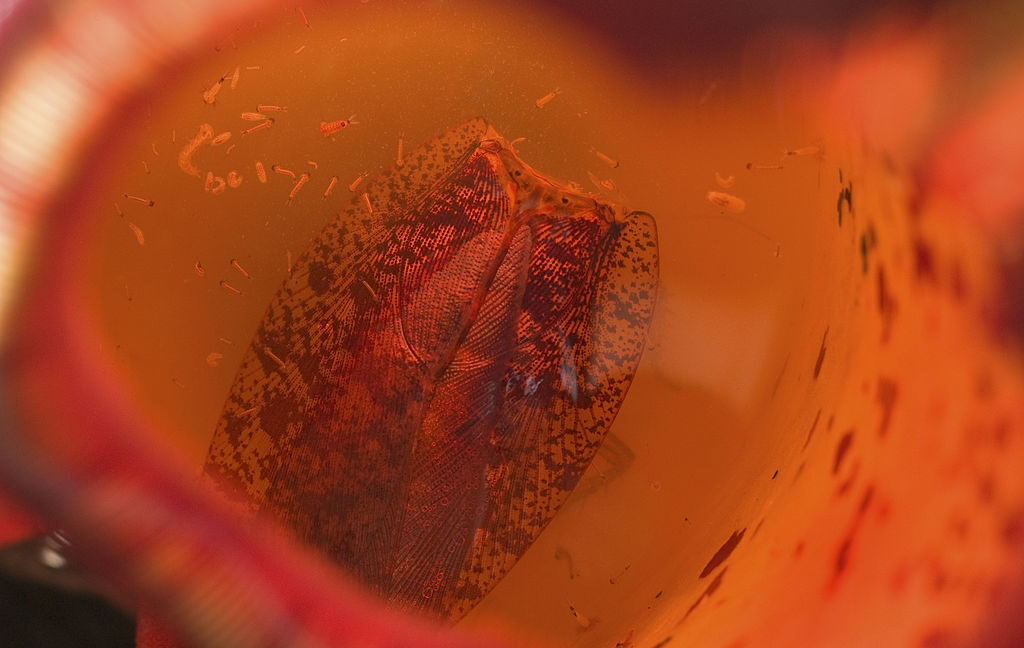

AI crawlers and sending them down an "infinite maze" of static files with no exit links, where they "get stuck"

Maybe against bad crawlers. If you know what you're looking for and aren't just trying to grab anything and everything, this should not be very effective. Any good web crawler has limits. This seems to be targeted at Facebook's apparently very dumb web crawler.

-

The way to get around it is respecting robots.txt lol

-

But that's not respecting the shareholders

-

Oh I love this!

-

Yeah I was just thinking... this is not at all how the tools work.

-

An infinite loop detector detects when you're going round in circles. It can't detect when you're going down an infinitely deep acyclic graph, because that, by definition, doesn't have any loops for it to detect. The best a crawler can do is have a threshold after which it gives up.
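To make the difference concrete, here is a rough sketch of a breadth-first crawler with both a visited set (cycle detection) and an optional depth or page limit. `maze_links` is a hypothetical stand-in for a tarpit where every page links only to brand-new, deeper pages; nothing here is taken from a real crawler or from the actual tool.

```python
from collections import deque


def maze_links(url, fanout=3):
    """Hypothetical tarpit: every page links to new, deeper pages (no cycles)."""
    return [f"{url}/{i}" for i in range(fanout)]


def crawl(start, get_links, max_depth=None, max_pages=1000):
    """Breadth-first crawl; returns (pages fetched, repeat links skipped)."""
    visited = {start}
    queue = deque([(start, 0)])
    fetched = 0
    revisits = 0
    while queue and fetched < max_pages:
        url, depth = queue.popleft()
        fetched += 1
        # Depth threshold: stop following links once we are too deep.
        if max_depth is not None and depth >= max_depth:
            continue
        for link in get_links(url):
            if link in visited:
                # Cycle detection: this only fires when a URL repeats.
                revisits += 1
                continue
            visited.add(link)
            queue.append((link, depth + 1))
    return fetched, revisits
```

On a two-page site that links back to itself, the visited set ends the crawl after two fetches. On the maze, the visited set never triggers, and the only thing that stops the crawl is `max_depth` or `max_pages`.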

-

Until somebody sends that link to a user of your website and they get banned.

Could even be done with a hidden image on another website.

-

I am so gonna deploy this. I want the crawlers to index the entire Mandelbrot set.

-

You can detect path points that come up repeatedly and avoid pursuing them further, which technically isn't called "infinite loop" detection.

-

It can detect cycles. From a quick look at the demo of this tool, it (slowly) generates some garbage text, after which it places 10 random links. Each of these links leads to a newly generated page. Thus, although generating the same link twice will surely happen, the chance that all 10 of the links have already been generated before is small.
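A back-of-the-envelope sketch of that estimate, under assumptions that are mine and not confirmed by the tool: links are drawn uniformly and independently from a fixed pool of `link_space` possible names, and the crawler has already seen `seen` of them. The `/maze/` path prefix and both function names are hypothetical.

```python
import random

LINKS_PER_PAGE = 10  # taken from the demo description above


def make_page(rng, link_space):
    """Stand-in for one generated page: 10 random link paths (assumed uniform)."""
    return [f"/maze/{rng.randrange(link_space)}" for _ in range(LINKS_PER_PAGE)]


def prob_all_links_seen(seen, link_space, links_per_page=LINKS_PER_PAGE):
    """Chance that every link on a fresh page is one the crawler already visited.

    Assumes independent, uniform draws, so each link has a seen/link_space
    chance of being a repeat.
    """
    return (seen / link_space) ** links_per_page


# Even after visiting half of a million-name pool, a dead-end page is rare.
half_seen = prob_all_links_seen(500_000, 1_000_000)
```

Under these assumptions, a crawler that has already covered half of the pool would still hit a page with no new links less than 0.1% of the time, so cycle detection alone barely slows it down.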