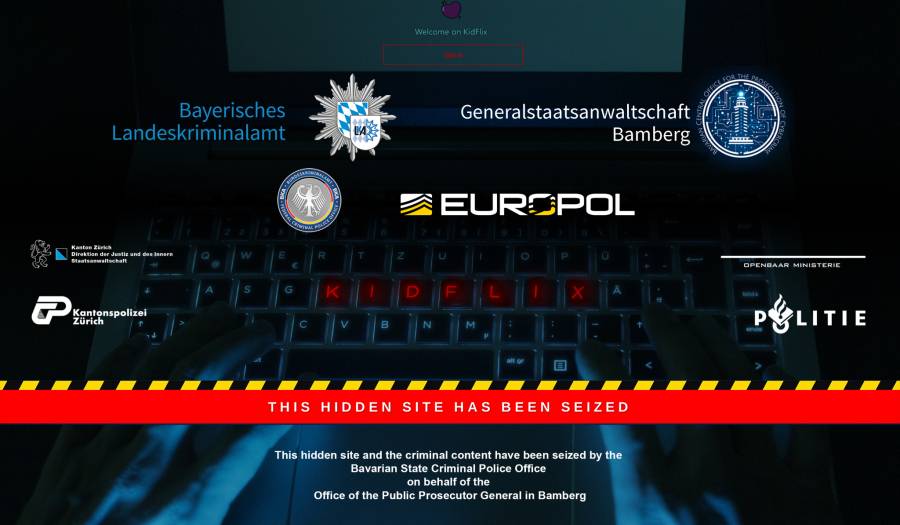

European police say KidFlix, "one of the largest pedophile platforms in the world," busted in joint operation.

-

Context is important I guess. So two things.

Is something illegal if it's not prosecuted?

Is it CSA if the kid is 9 but that's marrying age in that country?

If you answer yes, then no, then we'll not agree on this topic.

-

This platform used Tor. And because we want to protect privacy, they can make use of it.

-

I am not talking about CSA, I am talking about video material of CSA. Most countries with marriage ages that low have much more widespread bans on videos including sex of any kind.

As for prosecution, yes, it is still illegal if it is not prosecuted. There are many reasons not to prosecute something, ranging from resource constraints to intentionally turning a blind eye. Only a small minority of those reasons would lead a country to actively sabotage a major international investigation, especially once the trade-offs are considered (such as the loss of international reputation from refusing to cooperate).

-

This particular platform used Tor. That doesn't mean all platforms use privacy-centric anonymous networks. There are incidents of people using Kik, Snapchat, Facebook and other clearnet services for criminal activity such as drugs or CP.

-

Every now and again I am reminded of my sentiment that the introduction of "media" onto the Internet is a net harm. Maybe 256 dithered color photos like you'd see in Encarta 95 and that's the maximum extent of what should be allowed. There's just so much abuse from this kind of shit... despicable.

-

I just got ill

-

Let’s get rid of the printing press because it can be used for smut. /s

-

Raping kids has unfortunately been a thing since long before the internet. You could legally bang a 13 year old right up to the 1800s and in some places you still can.

As recently as the 1980s people would openly advocate for it to be legal, and remove the age of consent altogether. They'd get it in magazines from countries where it was still legal.

I suspect it's far less prevalent now than it's ever been. It's now pretty much universally seen as unacceptable, which is a good start.

-

It is very easy to feel disillusioned with the world, but it is important to remember that there are still good people all around willing to fight the good fight. It is also important to remember that technology is not inherently bad. It is a neutral tool that people can use for either good or bad purposes.

-

great pointless strawman. nice contribution.

-

It’s satire of your suggestion that we hold back progress but I guess it went over your head.

-

The youngest Playboy model, Eva Ionesco, was only 12 years old at the time of the photo shoot, and that was back in the late 1970s... It ended up being used as evidence against Eva’s mother (who was also the photographer), and she ended up losing custody of Eva as a result. The mother had started taking erotic photos (ugh) of Eva when she was only like 5 or 6 years old, under the guise of “art”. It wasn’t until the Playboy shoot that authorities started digging into the mother’s portfolio.

But also worth noting that the mother still holds copyright over the photos, and has refused to remove/redact/recall photos at Eva’s request. The police have confiscated hundreds of photos for being blatant CSAM, but the mother has been uncooperative in a full recall. Eva has sued the mother numerous times to try and get the copyright turned over, which would allow her to initiate the recall instead.

-

Here’s a reminder that you can submit photos of your hotel room to law enforcement, to assist in tracking down CSAM producers. The vast majority of sex trafficking media is produced in hotels. So being able to match furniture, bedspreads, carpet patterns, wallpaper, curtains, etc in the background to a specific hotel helps investigators narrow down when and where it was produced.

-

It doesn't though.

The most effective way to shut these forums down is to register bot accounts scraping links to the clearnet direct-download sites hosting the material and then reporting every single one.

If everything posted to these forums were deleted within a couple of days, their popularity would falter. And victims much prefer having their footage deleted to letting it stay up for years to catch a handful of site admins.

Frankly, I couldn't care less about punishing the people hosting these sites. It's an endless game of cat and mouse and will never be fast enough to meaningfully slow down the spread of CSAM.

Also, these sites don't produce CSAM themselves. They just spread it - most of the CSAM exists already and isn't made specifically for distribution.

-

I imagine it's easier to catch uploaders than viewers.

It's also probably more impactful to go for the big "power producers" simultaneously and quickly before word gets out and people start locking things down.

-

It definitely seems weird how easy it is to stumble upon CP online, and how open people are about sharing it, with no effort made, in many instances, to hide what they're doing. I've often wondered how much of the stuff is spread by pedo rings and how much is shared by cops trying to see how many people they can catch with it.

-

I once saw a list of defederated Lemmy instances. In most cases, and I mean like 95% of them, the reason for the defederation was pretty much in the instance name. CP everywhere. Humanity is a freaking mistake.